By Mouhamadou Diaw

Oracle 21c is currently released in the cloud, and I ran some tests to set up a two-node Grid Infrastructure cluster.

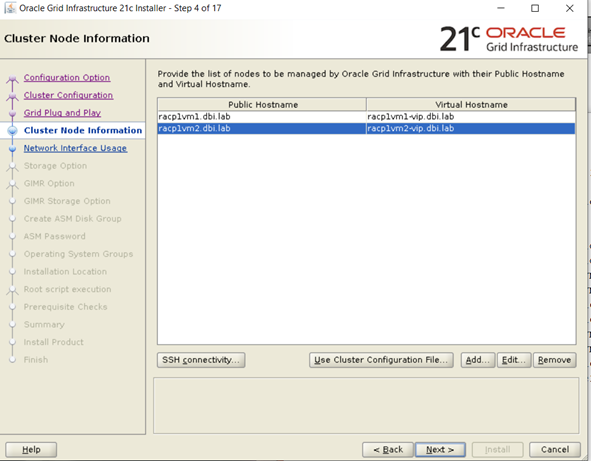

I used the following two VM servers for the test:

racp1vm1

racp1vm2

Below are the addresses I am using. Note that a DNS server is set up.

|

1

2

3

4

5

6

|

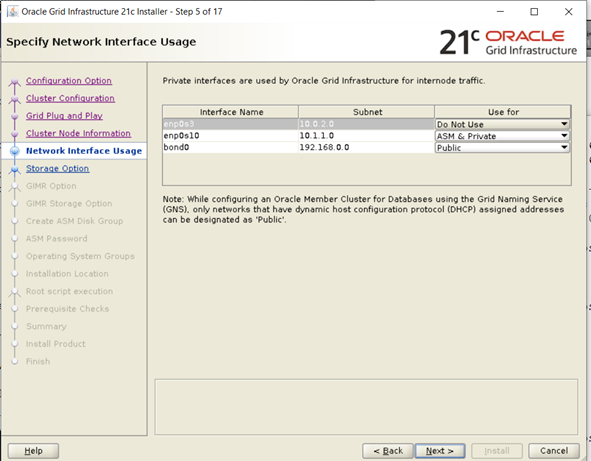

192.168.0.101 racp1vm1.dbi.lab racp1vm1 --public network192.168.0.103 racp1vm2.dbi.lab racp1vm2 --public network192.168.0.102 racp1vm1-vip.dbi.lab racp1vm1-vip --vitual network192.168.0.104 racp1vm2-vip.dbi.lab racp1vm2-vip --virtual network10.1.1.1 racp1vm1-priv.dbi.lab racp1vm1-priv --private network10.1.1.2 racp1vm2-priv.dbi.lab racp1vm2-priv --private network |

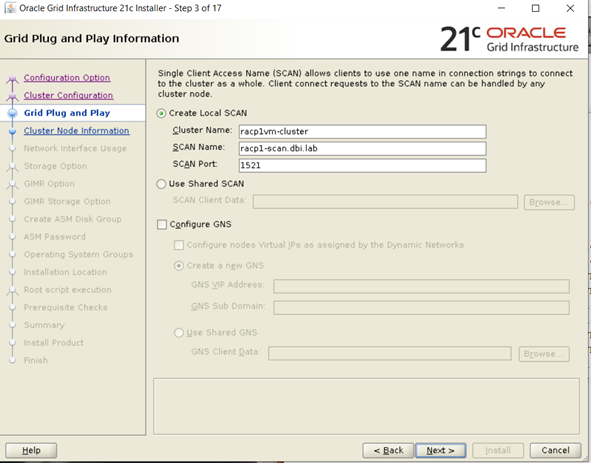

The SCAN name should be resolved in round-robin fashion: each time you run the nslookup command, it should return a different IP as the first address.

```
racp1-scan.dbi.lab : 192.168.0.105
racp1-scan.dbi.lab : 192.168.0.106
racp1-scan.dbi.lab : 192.168.0.107
```

```
[root@racp1vm1 diag]# nslookup racp1-scan
Server:         192.168.0.100
Address:        192.168.0.100#53

Name:   racp1-scan.dbi.lab
Address: 192.168.0.105
Name:   racp1-scan.dbi.lab
Address: 192.168.0.107
Name:   racp1-scan.dbi.lab
Address: 192.168.0.106

[root@racp1vm1 diag]# nslookup racp1-scan
Server:         192.168.0.100
Address:        192.168.0.100#53

Name:   racp1-scan.dbi.lab
Address: 192.168.0.107
Name:   racp1-scan.dbi.lab
Address: 192.168.0.106
Name:   racp1-scan.dbi.lab
Address: 192.168.0.105

[root@racp1vm1 diag]# nslookup racp1-scan
Server:         192.168.0.100
Address:        192.168.0.100#53

Name:   racp1-scan.dbi.lab
Address: 192.168.0.106
Name:   racp1-scan.dbi.lab
Address: 192.168.0.105
Name:   racp1-scan.dbi.lab
Address: 192.168.0.107

[root@racp1vm1 diag]#
```
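To check the round-robin behaviour without reading the full output each time, a small helper can print only the first answer address. This is a minimal sketch: the function name `first_scan_addr` is hypothetical, and it assumes the nslookup output layout of `Name:` lines followed by `Address:` lines.

```shell
# Print the first answer address found in nslookup output read from stdin.
# The DNS server's own "Address: ...#53" line is ignored because it comes
# before the first "Name:" line.
first_scan_addr() {
    awk '
        /^Name:/ { seen = 1; next }
        seen && !found && /^Address:/ { print $2; found = 1 }
    '
}

# Hypothetical usage on a cluster node (needs the DNS server, so commented out):
# for i in 1 2 3; do nslookup racp1-scan | first_scan_addr; done
```

If the three printed addresses differ from one call to the next, the DNS server is rotating the SCAN records as expected.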

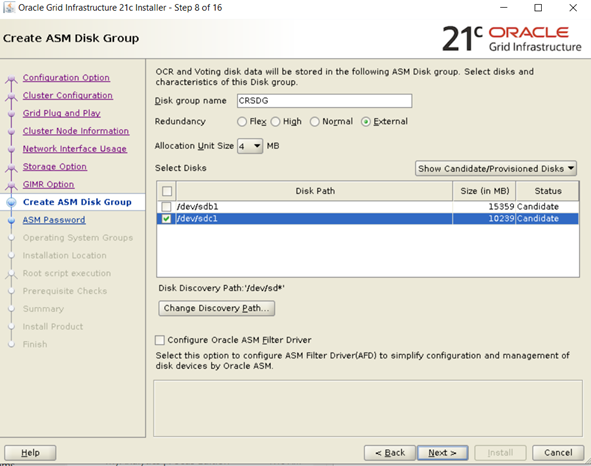

I used udev for the ASM disks. Below are the contents of my udev rules file:

```
[root@racp1vm1 install]# cat /etc/udev/rules.d/90-oracle-asm.rules
# Oracle ASM devices
KERNEL=="sd[b-f]1", OWNER="grid", GROUP="asmadmin", MODE="0660"
```
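Once a rule like this is loaded (typically with `udevadm control --reload-rules` followed by `udevadm trigger`, which the post does not show), it is worth confirming that the device nodes really carry the expected ownership. The sketch below assumes GNU `stat`; the function name `check_asm_perm` is hypothetical. Note that `stat` prints the mode without the leading zero, so `0660` in the rule is checked as `660`.

```shell
# Compare owner, group and octal mode of a path with the expected values.
# Usage: check_asm_perm PATH OWNER GROUP MODE
# Returns 0 on match, 1 on mismatch, 2 if the path cannot be read.
check_asm_perm() {
    actual=$(stat -c '%U %G %a' "$1") || return 2
    if [ "$actual" = "$2 $3 $4" ]; then
        echo "OK   $1 ($actual)"
    else
        echo "FAIL $1: expected '$2 $3 $4', got '$actual'"
        return 1
    fi
}

# Hypothetical usage on a cluster node (device names taken from the rule above):
# for dev in /dev/sd[b-f]1; do check_asm_perm "$dev" grid asmadmin 660; done
```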

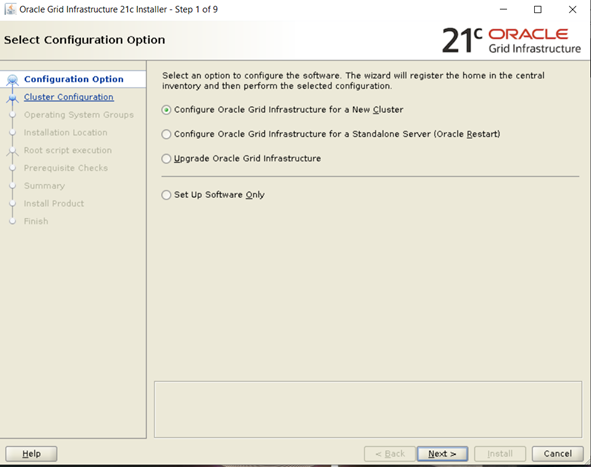

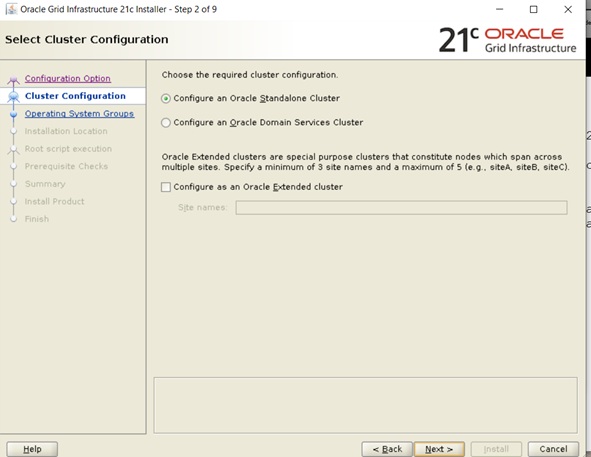

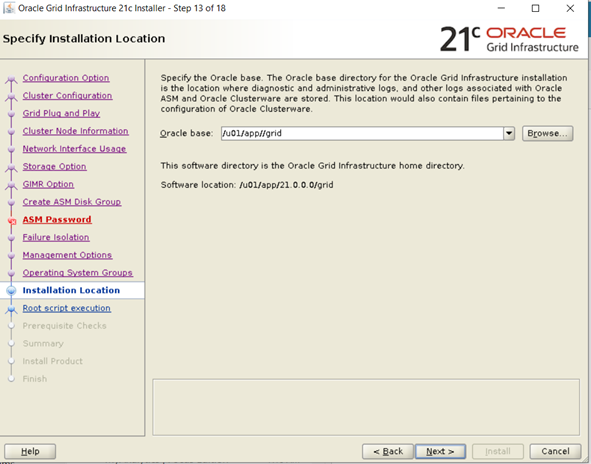

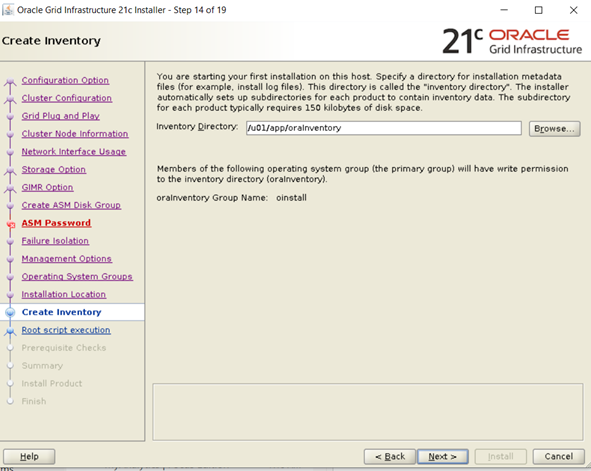

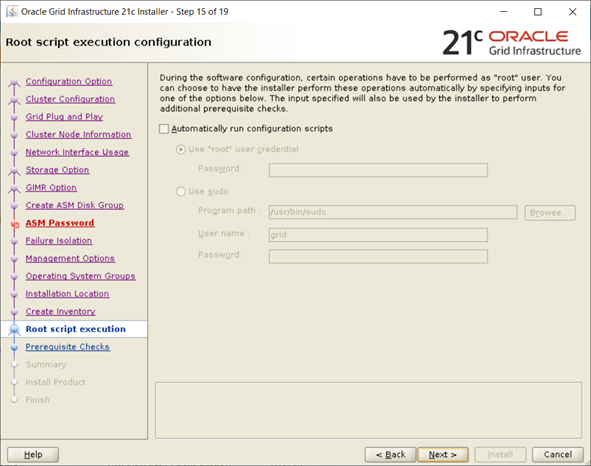

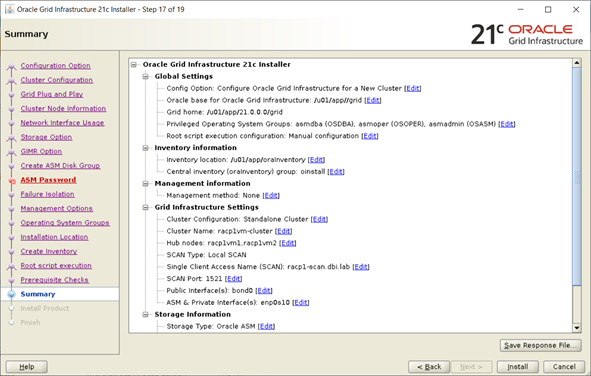

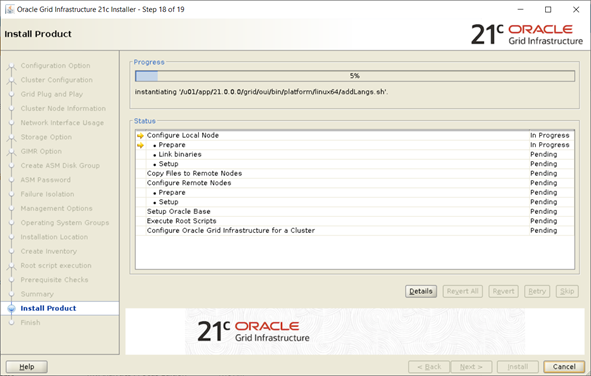

The installation is the same as for 19c. Unzip the software into your GRID_HOME:

```
[grid@racp1vm1 ~]$ mkdir -p /u01/app/21.0.0.0/grid
[grid@racp1vm1 ~]$ unzip -d /u01/app/21.0.0.0/grid grid_home-zip.zip
```

Then run the gridSetup.sh command:

```
[grid@racp1vm1 grid]$ ./gridSetup.sh
```

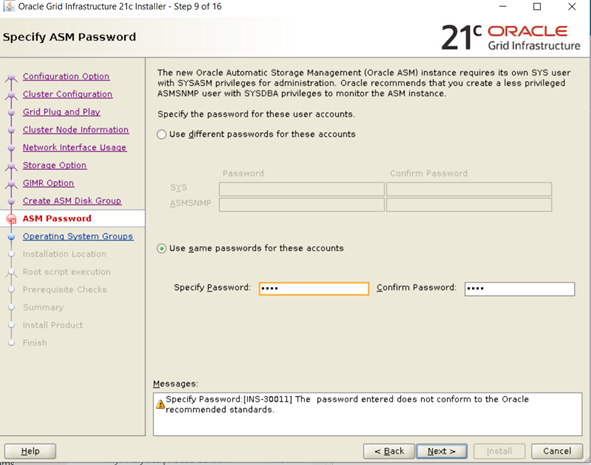

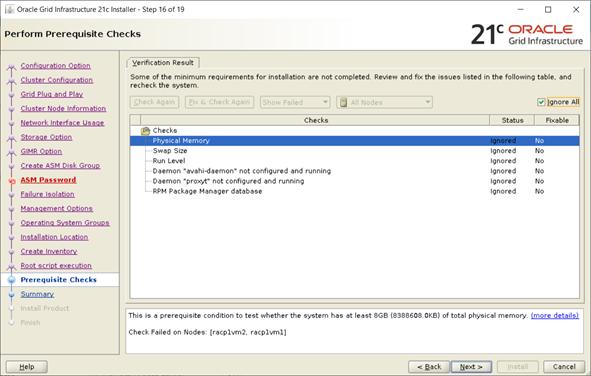

I got some warnings, which I decided to ignore, and went ahead.

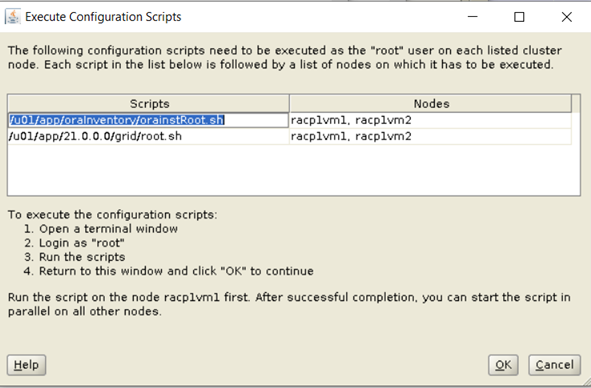

As specified, I executed the scripts on both nodes:

```
[root@racp1vm1 ~]# /u01/app/oraInventory/orainstRoot.sh
Changing permissions of /u01/app/oraInventory.
Adding read,write permissions for group.
Removing read,write,execute permissions for world.

Changing groupname of /u01/app/oraInventory to oinstall.
The execution of the script is complete.
[root@racp1vm1 ~]#

[root@racp1vm2 ~]# /u01/app/oraInventory/orainstRoot.sh
Changing permissions of /u01/app/oraInventory.
Adding read,write permissions for group.
Removing read,write,execute permissions for world.

Changing groupname of /u01/app/oraInventory to oinstall.
The execution of the script is complete.
[root@racp1vm2 ~]#
```

Below are the truncated outputs of root.sh, first on racp1vm1 and then on racp1vm2.

```
[root@racp1vm1 ~]# /u01/app/21.0.0.0/grid/root.sh
Performing root user operation.

The following environment variables are set as:
    ORACLE_OWNER= grid
    ORACLE_HOME=  /u01/app/21.0.0.0/grid

Enter the full pathname of the local bin directory: [/usr/local/bin]:
…..
CRS-4256: Updating the profile
Successful addition of voting disk 39600544f8794f63bfb83f128d9a9079.
Successfully replaced voting disk group with +CRSDG.
CRS-4256: Updating the profile
CRS-4266: Voting file(s) successfully replaced
##  STATE    File Universal Id                File Name Disk group
--  -----    -----------------                --------- ---------
 1. ONLINE   39600544f8794f63bfb83f128d9a9079 (/dev/sdc1) [CRSDG]
Located 1 voting disk(s).
2021/02/08 15:05:06 CLSRSC-594: Executing installation step 17 of 19: 'StartCluster'.
2021/02/08 15:06:00 CLSRSC-343: Successfully started Oracle Clusterware stack
2021/02/08 15:06:00 CLSRSC-594: Executing installation step 18 of 19: 'ConfigNode'.
2021/02/08 15:08:06 CLSRSC-594: Executing installation step 19 of 19: 'PostConfig'.
2021/02/08 15:08:27 CLSRSC-325: Configure Oracle Grid Infrastructure for a Cluster ... succeeded
```

```
[root@racp1vm2 install]# /u01/app/21.0.0.0/grid/root.sh
Performing root user operation.

The following environment variables are set as:
    ORACLE_OWNER= grid
    ORACLE_HOME=  /u01/app/21.0.0.0/grid

Enter the full pathname of the local bin directory: [/usr/local/bin]:
The contents of "dbhome" have not changed. No need to overwrite.
   Copying oraenv to /usr/local/bin ...
The file "coraenv" already exists in /usr/local/bin.  Overwrite it? (y/n)
[n]: y
……
2021/02/08 15:15:00 CLSRSC-343: Successfully started Oracle Clusterware stack
2021/02/08 15:15:00 CLSRSC-594: Executing installation step 18 of 19: 'ConfigNode'.
2021/02/08 15:15:20 CLSRSC-594: Executing installation step 19 of 19: 'PostConfig'.
2021/02/08 15:15:27 CLSRSC-325: Configure Oracle Grid Infrastructure for a Cluster ... succeeded
[root@racp1vm2 install]#
```
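The line to look for at the end of each root.sh run is the CLSRSC-325 success message. If you capture the output to a file, a check along the following lines can confirm it on each node. This is only a sketch: the function name `root_sh_succeeded` is hypothetical, and where the output is saved is left to you.

```shell
# Succeed (exit status 0) only if the root.sh output read from stdin
# contains the final CLSRSC-325 "succeeded" message.
root_sh_succeeded() {
    grep -q 'CLSRSC-325: Configure Oracle Grid Infrastructure for a Cluster .* succeeded'
}

# Hypothetical usage, with the captured output in a file of your choosing:
# root_sh_succeeded < /path/to/captured_root_sh_output.log && echo "root.sh OK"
```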

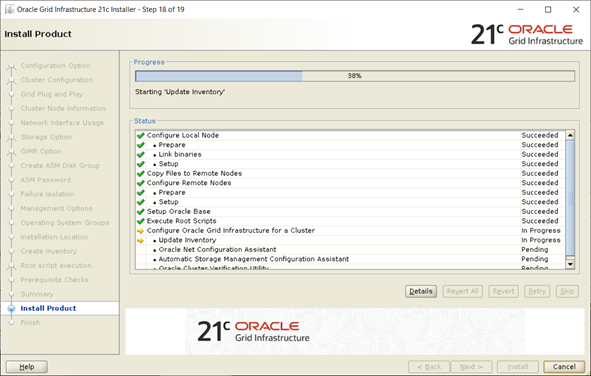

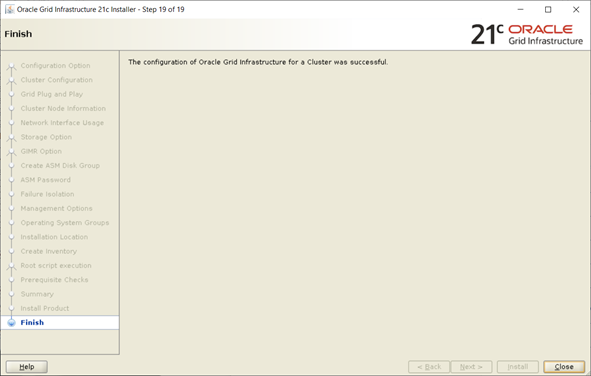

Then click OK

You can then verify that the installation went fine:

```
[root@racp1vm1 diag]# /u01/app/21.0.0.0/grid/bin/crsctl query crs activeversion
Oracle Clusterware active version on the cluster is [21.0.0.0.0]
[root@racp1vm1 diag]#

[root@racp1vm1 diag]# /u01/app/21.0.0.0/grid/bin/crsctl check cluster -all
**************************************************************
racp1vm1:
CRS-4537: Cluster Ready Services is online
CRS-4529: Cluster Synchronization Services is online
CRS-4533: Event Manager is online
**************************************************************
racp1vm2:
CRS-4537: Cluster Ready Services is online
CRS-4529: Cluster Synchronization Services is online
CRS-4533: Event Manager is online
**************************************************************
[root@racp1vm1 diag]#
```
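With more than two nodes, reading the `crsctl check cluster -all` output by eye gets tedious. A small filter can list only the nodes that do not report all three services (CRS-4537, CRS-4529, CRS-4533) as online. This is a sketch that assumes the output layout shown in this post; the function name `cluster_nodes_not_ready` is hypothetical.

```shell
# Read "crsctl check cluster -all" output on stdin and print every node
# that does not show all three "is online" messages.
# Exit status is 0 when every node is fully online, 1 otherwise.
cluster_nodes_not_ready() {
    awk '
        /^[^ ]+:$/ {
            node = substr($0, 1, length($0) - 1)
            nodes[++n] = node
            ok[node] = 0
            next
        }
        /^CRS-(4537|4529|4533): .* is online$/ {
            if (node != "") ok[node]++
        }
        END {
            for (i = 1; i <= n; i++) {
                if (ok[nodes[i]] < 3) {
                    print nodes[i]
                    bad = 1
                }
            }
            exit bad + 0
        }
    '
}

# Hypothetical usage on a cluster node:
# /u01/app/21.0.0.0/grid/bin/crsctl check cluster -all | cluster_nodes_not_ready
```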

```
[root@racp1vm1 diag]# /u01/app/21.0.0.0/grid/bin/crsctl stat res -t
--------------------------------------------------------------------------------
Name           Target  State        Server                   State details
--------------------------------------------------------------------------------
Local Resources
--------------------------------------------------------------------------------
ora.LISTENER.lsnr
               ONLINE  ONLINE       racp1vm1                 STABLE
               ONLINE  ONLINE       racp1vm2                 STABLE
ora.chad
               ONLINE  ONLINE       racp1vm1                 STABLE
               ONLINE  ONLINE       racp1vm2                 STABLE
ora.net1.network
               ONLINE  ONLINE       racp1vm1                 STABLE
               ONLINE  ONLINE       racp1vm2                 STABLE
ora.ons
               ONLINE  ONLINE       racp1vm1                 STABLE
               ONLINE  ONLINE       racp1vm2                 STABLE
ora.proxy_advm
               OFFLINE OFFLINE      racp1vm1                 STABLE
               OFFLINE OFFLINE      racp1vm2                 STABLE
--------------------------------------------------------------------------------
Cluster Resources
--------------------------------------------------------------------------------
ora.ASMNET1LSNR_ASM.lsnr(ora.asmgroup)
      1        ONLINE  ONLINE       racp1vm1                 STABLE
      2        ONLINE  ONLINE       racp1vm2                 STABLE
ora.CRSDG.dg(ora.asmgroup)
      1        ONLINE  ONLINE       racp1vm1                 STABLE
      2        ONLINE  ONLINE       racp1vm2                 STABLE
ora.LISTENER_SCAN1.lsnr
      1        ONLINE  ONLINE       racp1vm1                 STABLE
ora.LISTENER_SCAN2.lsnr
      1        ONLINE  ONLINE       racp1vm1                 STABLE
ora.LISTENER_SCAN3.lsnr
      1        ONLINE  ONLINE       racp1vm2                 STABLE
ora.asm(ora.asmgroup)
      1        ONLINE  ONLINE       racp1vm1                 Started,STABLE
      2        ONLINE  ONLINE       racp1vm2                 Started,STABLE
ora.asmnet1.asmnetwork(ora.asmgroup)
      1        ONLINE  ONLINE       racp1vm1                 STABLE
      2        ONLINE  ONLINE       racp1vm2                 STABLE
ora.cdp1.cdp
      1        ONLINE  ONLINE       racp1vm1                 STABLE
ora.cdp2.cdp
      1        ONLINE  ONLINE       racp1vm1                 STABLE
ora.cdp3.cdp
      1        ONLINE  ONLINE       racp1vm2                 STABLE
ora.cvu
      1        ONLINE  ONLINE       racp1vm1                 STABLE
ora.qosmserver
      1        ONLINE  ONLINE       racp1vm1                 STABLE
ora.racp1vm1.vip
      1        ONLINE  ONLINE       racp1vm1                 STABLE
ora.racp1vm2.vip
      1        ONLINE  ONLINE       racp1vm2                 STABLE
ora.scan1.vip
      1        ONLINE  ONLINE       racp1vm1                 STABLE
ora.scan2.vip
      1        ONLINE  ONLINE       racp1vm1                 STABLE
ora.scan3.vip
      1        ONLINE  ONLINE       racp1vm2                 STABLE
--------------------------------------------------------------------------------
[root@racp1vm1 diag]#
```
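In this listing everything with an ONLINE target is also in the ONLINE state, and only ora.proxy_advm is intentionally OFFLINE. To spot problems quickly in a longer listing, the output can be filtered for resources whose target is ONLINE but whose state is something else. The sketch below assumes the tabular layout of `crsctl stat res -t` shown in this post; the function name `res_not_online` is hypothetical.

```shell
# Read "crsctl stat res -t" output on stdin and print each resource instance
# whose Target is ONLINE while its State is not ONLINE.
# Resources with an OFFLINE target (such as ora.proxy_advm here) are skipped.
res_not_online() {
    awk '
        /^ora\./ { res = $1 }
        {
            for (i = 1; i < NF; i++) {
                if ($i == "ONLINE" || $i == "OFFLINE") {
                    if ($i == "ONLINE" && $(i + 1) != "ONLINE") {
                        rest = ""
                        for (j = i + 2; j <= NF; j++) rest = rest " " $j
                        print res ": " $(i + 1) rest
                    }
                    break
                }
            }
        }
    '
}

# Hypothetical usage on a cluster node:
# /u01/app/21.0.0.0/grid/bin/crsctl stat res -t | res_not_online
```

An empty result means every resource that is supposed to be running is running.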
