In a previous blog post, I covered snapshot and restore and briefly touched on Snapshot Lifecycle Management. In this post, I take a deeper look at SLM.

Snapshot lifecycle management (SLM) is the easiest way to regularly back up a cluster. An SLM policy automatically takes snapshots on a preset schedule. The policy can also delete snapshots based on retention rules you define.

Please note that Elasticsearch Service deployments automatically include the cloud-snapshot-policy SLM policy. Elasticsearch Service uses this policy to take periodic snapshots of your cluster.

SLM security

The following cluster privileges control access to the SLM actions when Elasticsearch security features are enabled:

- manage_slm : Allows a user to perform all SLM actions, including creating and updating policies and starting and stopping SLM.

- read_slm : Allows a user to perform all read-only SLM actions, such as getting policies and checking the SLM status.

- cluster:admin/snapshot/* : Allows a user to take and delete snapshots of any index, whether or not they have access to that index.

You can create and manage roles to assign these privileges through Kibana Management.

To grant the privileges necessary to create and manage SLM policies and snapshots, you can set up a role with the manage_slm and cluster:admin/snapshot/* cluster privileges and full access to the SLM history indices.

For example, the following request creates an slm-admin role:

POST _security/role/slm-admin
{
  "cluster": [ "manage_slm", "cluster:admin/snapshot/*" ],
  "indices": [
    {
      "names": [ ".slm-history-*" ],
      "privileges": [ "all" ]
    }
  ]
}

To grant read-only access to SLM policies and the snapshot history, you can set up a role with the read_slm cluster privilege and read access to the snapshot lifecycle management history indices.

For example, the following request creates an slm-read-only role:

POST _security/role/slm-read-only
{
  "cluster": [ "read_slm" ],
  "indices": [
    {
      "names": [ ".slm-history-*" ],
      "privileges": [ "read" ]
    }
  ]
}
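The two role requests above differ only in their cluster privileges and in the access level on the history indices. As a minimal sketch (the helper names `slm_role_body` and `as_console_request` are illustrative and not part of any Elasticsearch client), the bodies could be generated from one function and printed as console requests:

```python
import json


def slm_role_body(cluster_privileges, history_privileges):
    """Build the JSON body for a POST _security/role/<name> request."""
    return {
        "cluster": list(cluster_privileges),
        "indices": [
            {
                # SLM history indices, as used in the requests above.
                "names": [".slm-history-*"],
                "privileges": list(history_privileges),
            }
        ],
    }


# Role name -> request body, mirroring the two roles described above.
ROLES = {
    "slm-admin": slm_role_body(["manage_slm", "cluster:admin/snapshot/*"], ["all"]),
    "slm-read-only": slm_role_body(["read_slm"], ["read"]),
}


def as_console_request(role_name):
    """Render a role as text that can be pasted into the Kibana console."""
    body = json.dumps(ROLES[role_name], indent=2)
    return f"POST _security/role/{role_name}\n{body}"


print(as_console_request("slm-read-only"))
```

Keeping the role definitions in one place like this makes it easier to review which privileges each role really carries before applying them.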

Create an SLM policy

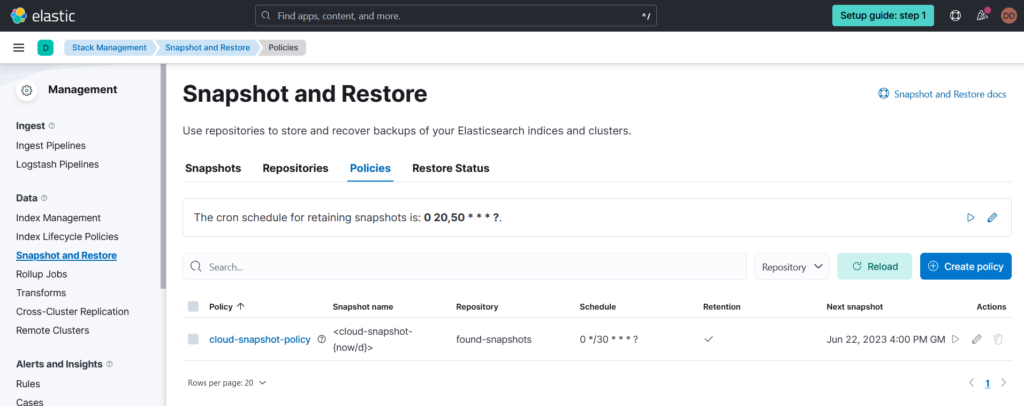

To manage SLM in Kibana, go to the main menu and click Stack Management > Snapshot and Restore > Policies. To create a policy, click Create policy.

You can also manage SLM using the SLM APIs. To create a policy, use the create SLM policy API.

The following request creates a policy that backs up the cluster state, all data streams, and all indices daily at 1:30 a.m. UTC:

PUT _slm/policy/nightly-snapshots
{
  "schedule": "0 30 1 * * ?",
  "name": "<nightly-snap-{now/d}>",
  "repository": "my_repository",
  "config": {
    "indices": "*",
    "include_global_state": true
  },
  "retention": {
    "expire_after": "30d",
    "min_count": 5,
    "max_count": 50
  }
}

- schedule: When to take snapshots, written in Cron syntax.

- name: Name pattern for the policy’s snapshots. Supports date math. To prevent naming conflicts, the policy also appends a UUID to each snapshot name.

- repository: Registered snapshot repository used to store the policy’s snapshots.

- config: Data streams and indices to include in the policy’s snapshots.

- include_global_state: If true, the policy’s snapshots include the cluster state.

- retention: This configuration keeps snapshots for 30 days, retaining at least 5 and no more than 50 snapshots regardless of age.
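To make the schedule and name settings more concrete, here is a small sketch that labels the fields of the cron expression and approximates how the date math name resolves. It assumes the Elasticsearch cron field order (seconds first, optional year last) and the default date math format `yyyy.MM.dd`; the function names are hypothetical, and the unique suffix that SLM appends to the name is not reproduced.

```python
from datetime import datetime, timezone

# Assumed field order of an Elasticsearch cron expression; the year is optional.
CRON_FIELDS = ["seconds", "minutes", "hours", "day_of_month", "month", "day_of_week", "year"]


def describe_cron(expression):
    """Map each part of a 6- or 7-field cron expression to its field name."""
    parts = expression.split()
    if not 6 <= len(parts) <= 7:
        raise ValueError(f"expected 6 or 7 fields, got {len(parts)}")
    return dict(zip(CRON_FIELDS, parts))


def resolve_day_name(prefix, when):
    """Approximate <prefix{now/d}> for a given moment, without the appended suffix."""
    return f"{prefix}{when.astimezone(timezone.utc):%Y.%m.%d}"


print(describe_cron("0 30 1 * * ?"))
print(resolve_day_name("nightly-snap-", datetime(2022, 9, 15, 1, 30, tzinfo=timezone.utc)))
```

Read this way, `0 30 1 * * ?` means second 0, minute 30, hour 1, every day of every month, which matches the 1:30 a.m. UTC schedule described above.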

Manually run an SLM policy

You can manually run an SLM policy to immediately create a snapshot. This is useful for testing a new policy or taking a snapshot before an upgrade. Manually running a policy doesn’t affect its snapshot schedule.

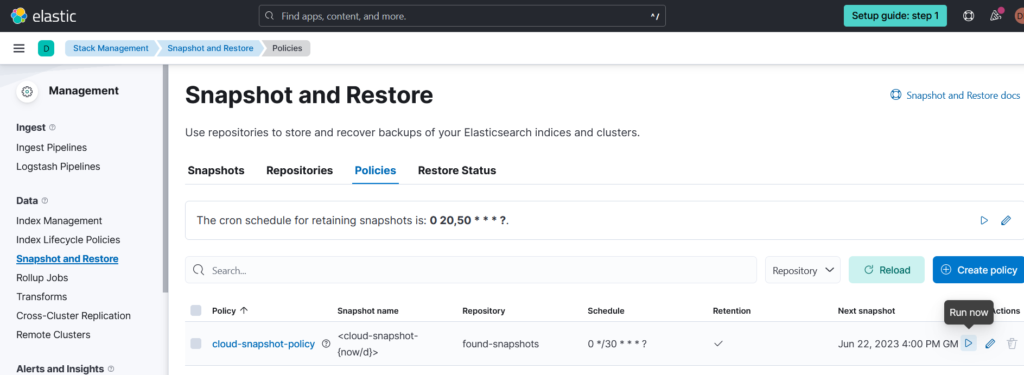

To run a policy in Kibana, go to the Policies page and click the run icon under the Actions column.

You can also use the execute SLM policy API.

POST _slm/policy/nightly-snapshots/_execute

Please note that the snapshot process runs in the background. To monitor any currently running snapshots, use the get snapshot API with the _current request path parameter.

GET _snapshot/my_repository/_current
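If you want to wait for a manually triggered snapshot to finish, the response of the request above can be polled. The sketch below is one possible approach, not an official client: it assumes the response contains a `snapshots` array whose entries have `snapshot` and `state` fields, and it takes a `fetch` callable (hypothetical, supplied by you) that performs the GET request and returns the parsed JSON.

```python
import time


def in_progress(response):
    """Return the names of snapshots that are still running."""
    return [
        snap["snapshot"]
        for snap in response.get("snapshots", [])
        if snap.get("state") == "IN_PROGRESS"
    ]


def wait_until_idle(fetch, interval_seconds=10.0, max_polls=60):
    """Poll until no snapshot is running; return False if max_polls is exhausted."""
    for _ in range(max_polls):
        if not in_progress(fetch()):
            return True
        time.sleep(interval_seconds)
    return False


# Made-up sample response, for illustration only.
sample = {"snapshots": [{"snapshot": "nightly-snap-example", "state": "IN_PROGRESS"}]}
print(in_progress(sample))
```

Passing the HTTP call in as `fetch` keeps the polling logic independent of how you authenticate against the cluster.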

SLM Retention

SLM snapshot retention is a cluster-level task that runs separately from a policy’s snapshot schedule. To control when the SLM retention task runs, configure the slm.retention_schedule cluster setting.

PUT _cluster/settings
{
  "persistent" : {
    "slm.retention_schedule" : "0 30 1 * * ?"
  }
}

To immediately run the retention task, execute:

POST _slm/_execute_retention

Please note that an SLM policy’s retention rules only apply to snapshots created using the policy. Other snapshots don’t count toward the policy’s retention limits.
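The interaction between expire_after, min_count, and max_count can be easier to follow with a small model. The function below is a simplified sketch of the rules as described in this post, not Elasticsearch's actual implementation: it only looks at snapshot ages in days for a single policy, ignores failed snapshots, and uses the hypothetical name `snapshots_to_delete`.

```python
def snapshots_to_delete(ages_days, expire_after_days=30, min_count=5, max_count=50):
    """Return the ages of the snapshots that the retention task would remove.

    ages_days holds the age of every snapshot created by ONE policy;
    snapshots taken outside the policy are not counted.
    """
    newest_first = sorted(ages_days)
    doomed = []
    for rank, age in enumerate(newest_first):
        # Anything beyond the newest max_count snapshots goes, regardless of age.
        over_max = rank >= max_count
        # Expired snapshots go, unless they are among the newest min_count.
        expired = age > expire_after_days and rank >= min_count
        if over_max or expired:
            doomed.append(age)
    return doomed


print(snapshots_to_delete([1, 10, 20, 40, 50, 60, 70]))
```

In this example the snapshots aged 40 and 50 days are older than 30 days but are kept, because removing them would leave fewer than 5 snapshots; only the two oldest are selected.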

These are the most important points to know about SLM: you now know how to create, configure, run, and secure SLM policies. It is a very helpful feature, and I hope this post is useful to you. If you have any questions, don’t hesitate to ask 🙂
