This is something I had been waiting for for a while, in fact since SQL Server 2017, and the news came out on Wednesday 09th September 2019. Running non-root SQL Server containers is now possible on the next version of SQL Server (2019), and the feature has been backported to SQL Server 2017 as well. Non-root SQL Server containers will likely be one of the hidden gems among the new SQL Server features, but this is definitely good news for me because it will ease the move of SQL Server containers to production from a security standpoint.

At this stage, there is no need to explain why running SQL Server containers, or more generally applications, with root privileges within a container is not a best practice. For further information, I invite you to use your google-fu and take a look at the different threats implied by such a configuration.

Let’s start with Docker environments. First, Microsoft provides a Dockerfile to build an image for both SQL Server 2017 and SQL Server 2019. Note that the Dockerfile is itself based on a SQL Server Docker image and performs some extra configuration for non-root privilege capabilities. Here is the interesting part:

    # Example of creating a SQL Server 2019 container image that will run as a user 'mssql' instead of root
    # This example is based on the official image from Microsoft and effectively changes the user that SQL Server runs as
    # and allows for dumps to generate as a non-root user
    FROM mcr.microsoft.com/mssql/server:2019-latest

    # Create non-root user and update permissions
    #
    RUN useradd -M -s /bin/bash -u 10001 -g 0 mssql
    RUN mkdir -p -m 770 /var/opt/mssql && chgrp -R 0 /var/opt/mssql

    # Grant sql the permissions to connect to ports <1024 as a non-root user
    #
    RUN setcap 'cap_net_bind_service+ep' /opt/mssql/bin/sqlservr

    # Allow dumps from the non-root process
    #
    RUN setcap 'cap_sys_ptrace+ep' /opt/mssql/bin/paldumper
    RUN setcap 'cap_sys_ptrace+ep' /usr/bin/gdb

    # Add an ldconfig file because setcap causes the os to remove LD_LIBRARY_PATH
    # and other env variables that control dynamic linking
    #
    RUN mkdir -p /etc/ld.so.conf.d && touch /etc/ld.so.conf.d/mssql.conf
    RUN echo -e "# mssql libs\n/opt/mssql/lib" >> /etc/ld.so.conf.d/mssql.conf
    RUN ldconfig

    USER mssql
    CMD ["/opt/mssql/bin/sqlservr"]

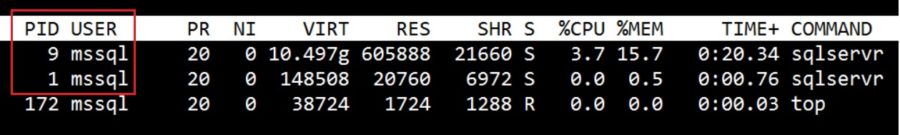

Note the sections where the mssql user is created and then used to run the image. So, the new image specification implies running the sqlservr process as this mssql user, as shown below:

$ docker exec -ti sql19 top

The process owner is identified by name because the user is already defined in the /etc/passwd file within the container namespace:

    $ docker exec -ti sql19 cat /etc/passwd | grep mssql
    mssql:x:10001:0::/home/mssql:/bin/bash

Let’s go ahead and talk about persisting SQL Server database files on external storage. In this case, we need to refer to the Microsoft documentation to configure volumes and the underlying storage permissions according to the scenario we have to deal with.

If you don’t specify any user (and group) when spinning up the container, the sqlservr process will run with the identity of the mssql user created inside the container and as part of the root group. The underlying host filesystem must be configured accordingly, granting access either to a user with the same UID (10001) or to the root group (GID = 0). Otherwise, chances are you will experience permission issues with the following error message:

    SQL Server 2019 will run as non-root by default.
    This container is running as user mssql.
    To learn more visit https://go.microsoft.com/fwlink/?linkid=2099216.
    /opt/mssql/bin/sqlservr: Error: Directory [/var/opt/mssql/system/] could not be created. Errno [13]
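The Errno [13] failure above comes down to ordinary Unix mode bits on the mounted host directory. As a rough pre-flight check, the sketch below tests whether a given UID/GID pair could create files in a directory. The helper name `can_write_dir` is hypothetical (it is not part of the Microsoft image or tooling). It assumes a Linux host with a `stat` that supports `-c`, and it deliberately ignores supplementary groups, ACLs and SELinux labels.

```shell
#!/bin/sh
# Decide whether a process running as UID/GID could create files in a
# directory, using only the classic owner/group/other mode bits.
# Hypothetical helper: ignores supplementary groups, ACLs and SELinux,
# and assumes GNU or BusyBox stat (-c option).
can_write_dir() {
    uid=$1; gid=$2; dir=$3

    # Root bypasses the mode bits entirely.
    [ "$uid" -eq 0 ] && return 0

    owner=$(stat -c '%u' "$dir") || return 2
    group=$(stat -c '%g' "$dir") || return 2
    mode=$(stat -c '%a' "$dir") || return 2
    mode=$((0$mode))            # interpret the octal string, e.g. 770

    if [ "$uid" -eq "$owner" ]; then
        bits=$(( (mode >> 6) & 7 ))     # owner triplet
    elif [ "$gid" -eq "$group" ]; then
        bits=$(( (mode >> 3) & 7 ))     # group triplet
    else
        bits=$(( mode & 7 ))            # other triplet
    fi

    # Creating entries in a directory needs both write (2) and search (1).
    [ $(( bits & 3 )) -eq 3 ]
}
```

For the default identity described above, a call such as `can_write_dir 10001 0 /u00/sqlserver && echo "looks writable"` would be one way to use it before starting the container.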

If you want to run the container as a custom user and group that you created yourself, you must be aware of the different database file placement scenarios. The first one consists of using the default configuration with all the SQL Server logs, data and transaction log files in the /var/opt/mssql path. In this case, your custom user’s UID and GID can be part of the security context of the folder hierarchy on the host as follows:

    $ ls -l | grep sqlserver
    drwxrwx---. 6 mssql mssql 59 Sep 27 19:08 sqlserver
    $ id mssql
    uid=1100(mssql) gid=1100(mssql) groups=1100(mssql),100(users)

The docker command below specifies the UID and GID of my custom user through the -u parameter:

    docker run -d \
      --name sql19 \
      -u $(id -u mssql):$(id -g mssql) \
      -e "MSSQL_PID=Developer" \
      -e "ACCEPT_EULA=Y" \
      -e "SA_PASSWORD=Password1" \
      -e "MSSQL_AGENT_ENABLED=True" \
      -e "MSSQL_LCID=1033" \
      -e "MSSQL_MEMORY_LIMIT_MB=2048" \
      -v "/u00/sqlserver:/var/opt/mssql" \
      -p 1451:1433 -d 2019-latest-non-root

Note that the username is missing and replaced by the UID of the mssql user that I created myself.

This is normal behavior because my user is not known within the container namespace. There is no record for my user with UID = 1100. The system only knows the mssql user with UID = 10001, as shown below:

    I have no name!@e698c3db2180:/$ whoami
    whoami: cannot find name for user ID 1100
    $ cat /etc/passwd | grep mssql | cut -d":" -f 1,3
    mssql:10001
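The "I have no name!" prompt is simply the result of a failed UID-to-name lookup in the container’s /etc/passwd. A minimal sketch of that lookup is shown below. The function name `name_for_uid` is hypothetical and only illustrates the mechanism; it is not how whoami is implemented.

```shell
#!/bin/sh
# Print the user name recorded for a UID in a passwd-format file.
# Returns non-zero when no entry matches, which is the situation behind
# the "I have no name!" prompt. Hypothetical helper for illustration.
name_for_uid() {
    uid=$1
    passwd_file=${2:-/etc/passwd}
    awk -F: -v uid="$uid" '
        $3 == uid { print $1; found = 1; exit }
        END { exit !found }
    ' "$passwd_file"
}
```

Run inside the container, `name_for_uid 10001` would be expected to print mssql, while `name_for_uid 1100` would print nothing and return a non-zero status, since only the UID 10001 entry exists there.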

Out of curiosity, we may wonder how SQL Server chooses the correct user for the sqlservr process. Indeed, I created two users with the same name but with different UIDs. After some investigation, I think that taking a look at the uid_entrypoint definition in the microsoft/mssql-docker GitHub project could help in understanding this behavior:

If we don’t specify the UID / GID during the container’s creation, the whoami command will fail and the mssql user’s UID defined in the Dockerfile (cf. USER mssql) will be chosen.

The second scenario consists of introducing some SQL Server best practices in terms of database file placement. In a previous blog post, I wrote about a possible implementation based on a flexible architecture for SQL Server on Linux, which may also fit containers. Here, database files are stored outside of the default /var/opt/mssql path, and the non-root container has the restriction that it must run as part of the root group, as mentioned in the Microsoft documentation:

The non-root container has the restriction that it must run as part of the root group unless a volume is mounted to '/var/opt/mssql' that the non-root user can access. The root group doesn’t grant any extra root permissions to the non-root user.

Here is my implementation of the flexible architecture template with the required Linux permissions in my context:

    $ ls -ld /u[0-9]*/sql*2/
    drwxrwx---. 2 mssql root    6 Sep 24 22:02 /u00/sqlserver2/
    drwxrwx---. 2 mssql root 4096 Sep 27 14:20 /u01/sqlserverdata2/
    drwxrwx---. 2 mssql root   25 Sep 27 14:20 /u02/sqlserverlog2/
    drwxrwx---. 2 mssql root    6 Sep 24 22:04 /u03/sqlservertempdb2/
    drwxrwx---. 2 mssql root    6 Sep 27 10:09 /u98/sqlserver2/

… with:

- /u00/sqlserver2 (binaries structure that will contain remaining files in /var/opt/mssql path)

- /u01/sqlserverdata2 (data files including user, system and tempdb databases)

- /u02/sqlserverlog2 (transaction log files)

- /u98/sqlserver2 (database backups)
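A host layout like the one listed above could be prepared along these lines. This is only a sketch: the helper name `prepare_sql_dir` is hypothetical, and the ownership step is left as a commented usage example because it needs root on the host and an existing mssql user.

```shell
#!/bin/sh
# Create a directory with mode 770 (rwx for owner and group, nothing for
# others), matching the drwxrwx--- entries in the listing.
# Hypothetical helper, not taken from the original setup.
prepare_sql_dir() {
    dir=$1
    mkdir -p "$dir" && chmod 770 "$dir"
}

# Assumed usage on the host, run as root so that chown can succeed:
#   for d in /u00/sqlserver2 /u01/sqlserverdata2 /u02/sqlserverlog2 /u98/sqlserver2; do
#       prepare_sql_dir "$d" && chown mssql:root "$d"
#   done
```

Setting the group to root mirrors the restriction quoted from the Microsoft documentation: outside of /var/opt/mssql, the non-root container relies on root group membership for access.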

And accordingly, my docker command and parameters to start my SQL Server container that will sit on my flexible architecture:

    docker run -d \
      --name sql19 \
      -u $(id -u mssql):0 \
      -e "MSSQL_PID=Developer" \
      -e "ACCEPT_EULA=Y" \
      -e "SA_PASSWORD=Password1" \
      -e "MSSQL_AGENT_ENABLED=True" \
      -e "MSSQL_LCID=1033" \
      -e "MSSQL_MEMORY_LIMIT_MB=2048" \
      -e "MSSQL_MASTER_DATA_FILE=/u01/sqlserverdata/master.mdf" \
      -e "MSSQL_MASTER_LOG_FILE=/u02/sqlserverlog/mastlog.ldf" \
      -e "MSSQL_DATA_DIR=/u01/sqlserverdata" \
      -e "MSSQL_LOG_DIR=/u02/sqlserverlog" \
      -e "MSSQL_BACKUP_DIR=/u98/sqlserver" \
      -v "/u00/sqlserver2:/var/opt/mssql" \
      -v "/u01/sqlserverdata2:/u01/sqlserverdata" \
      -v "/u02/sqlserverlog2:/u02/sqlserverlog" \
      -v "/u98/sqlserver2:/u98/sqlserver" \
      -p 1451:1433 -d 2019-latest-non-root

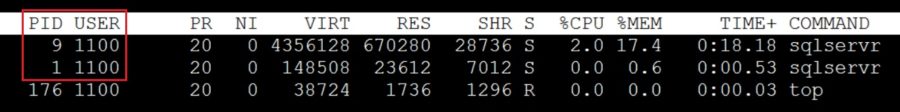

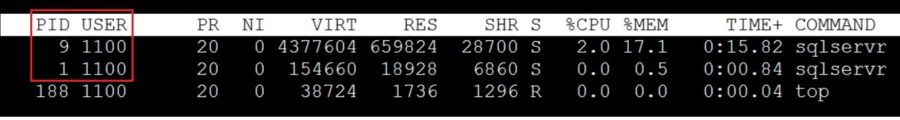

The mssql user created on my own from the host (with UID = 1100) is used by the sqlservr process:

The system and user database files are placed according to my specification:

    master> create database test;
    Commands completed successfully.
    Time: 0.956s
    master> \n ldd %%
    +--------+----------------+---------------------------------+-----------+
    | DB     | logical_name   | physical_name                   | size_MB   |
    |--------+----------------+---------------------------------+-----------|
    | master | master         | /u01/sqlserverdata/master.mdf   | 71        |
    | master | mastlog        | /u02/sqlserverlog/mastlog.ldf   | 32        |
    | tempdb | tempdev        | /u01/sqlserverdata/tempdb.mdf   | 128       |
    | tempdb | templog        | /u01/sqlserverdata/templog.ldf  | 128       |
    | tempdb | tempdev2       | /u01/sqlserverdata/tempdb2.ndf  | 128       |
    | tempdb | tempdev3       | /u01/sqlserverdata/tempdb3.ndf  | 128       |
    | tempdb | tempdev4       | /u01/sqlserverdata/tempdb4.ndf  | 128       |
    | model  | modeldev       | /u01/sqlserverdata/model.mdf    | 128       |
    | model  | modellog       | /u01/sqlserverdata/modellog.ldf | 128       |
    | msdb   | MSDBData       | /u01/sqlserverdata/MSDBData.mdf | 236       |
    | msdb   | MSDBLog        | /u01/sqlserverdata/MSDBLog.ldf  | 12        |
    | test   | test           | /u01/sqlserverdata/test.mdf     | 128       |
    | test   | test_log       | /u02/sqlserverlog/test_log.ldf  | 128       |
    +--------+----------------+---------------------------------+-----------+

I may correlate the above output with corresponding files persisted on underlying storage according to my flexible architecture specification:

    $ sudo ls -lR /u[0-9]*/sqlserver*2/
    /u00/sqlserver2/:
    total 4
    drwxrwx---. 2 mssql root 4096 Sep 28 17:39 log
    drwxr-xr-x. 2 mssql root 25 Sep 28 17:39 secrets
    /u00/sqlserver2/log:
    total 428
    -rw-r-----. 1 mssql root 10855 Sep 28 17:39 errorlog
    -rw-r-----. 1 mssql root 10856 Sep 28 17:37 errorlog.1
    -rw-r-----. 1 mssql root 0 Sep 28 17:37 errorlog.2
    -rw-r-----. 1 mssql root 77824 Sep 28 17:37 HkEngineEventFile_0_132141586653320000.xel
    -rw-r-----. 1 mssql root 77824 Sep 28 17:39 HkEngineEventFile_0_132141587692350000.xel
    -rw-r-----. 1 mssql root 2560 Sep 28 17:39 log_1.trc
    -rw-r-----. 1 mssql root 2560 Sep 28 17:37 log.trc
    -rw-r-----. 1 mssql root 6746 Sep 28 17:37 sqlagent.1
    -rw-r-----. 1 mssql root 6746 Sep 28 17:39 sqlagent.out
    -rw-r-----. 1 mssql root 114 Sep 28 17:39 sqlagentstartup.log
    -rw-r-----. 1 mssql root 106496 Sep 28 17:37 system_health_0_132141586661720000.xel
    -rw-r-----. 1 mssql root 122880 Sep 28 17:41 system_health_0_132141587698940000.xel
    /u00/sqlserver2/secrets:
    total 4
    -rw-------. 1 mssql root 44 Sep 28 17:39 machine-key
    /u01/sqlserverdata2/:
    total 105220
    -rw-r-----. 1 mssql root 256 Sep 27 14:20 Entropy.bin
    -rw-r-----. 1 mssql root 4653056 Sep 28 17:39 master.mdf
    -rw-r-----. 1 mssql root 8388608 Sep 28 17:39 modellog.ldf
    -rw-r-----. 1 mssql root 8388608 Sep 28 17:39 model.mdf
    -rw-r-----. 1 mssql root 14024704 Sep 27 14:20 model_msdbdata.mdf
    -rw-r-----. 1 mssql root 524288 Sep 27 14:20 model_msdblog.ldf
    -rw-r-----. 1 mssql root 524288 Sep 27 14:20 model_replicatedmaster.ldf
    -rw-r-----. 1 mssql root 4653056 Sep 27 14:20 model_replicatedmaster.mdf
    -rw-r-----. 1 mssql root 15466496 Sep 28 17:39 msdbdata.mdf
    -rw-r-----. 1 mssql root 786432 Sep 28 17:39 msdblog.ldf
    -rw-r-----. 1 mssql root 8388608 Sep 28 17:39 tempdb2.ndf
    -rw-r-----. 1 mssql root 8388608 Sep 28 17:39 tempdb3.ndf
    -rw-r-----. 1 mssql root 8388608 Sep 28 17:39 tempdb4.ndf
    -rw-r-----. 1 mssql root 8388608 Sep 28 17:39 tempdb.mdf
    -rw-r-----. 1 mssql root 8388608 Sep 28 17:39 templog.ldf
    -rw-r-----. 1 mssql root 8388608 Sep 28 17:39 test.mdf
    /u02/sqlserverlog2/:
    total 10240
    -rw-r-----. 1 mssql root 2097152 Sep 28 17:39 mastlog.ldf
    -rw-r-----. 1 mssql root 8388608 Sep 28 17:39 test_log.ldf
    /u03/sqlservertempdb2/:
    total 0
    /u98/sqlserver2/:
    total 0

What next? Because in production your containers will run on top of an orchestrator like Kubernetes, the question is how to implement such privilege restrictions in this context. Kubernetes provides security contexts at different levels, including pods and containers. In this blog post example, I applied the security context within the pod specification of the deployment template.

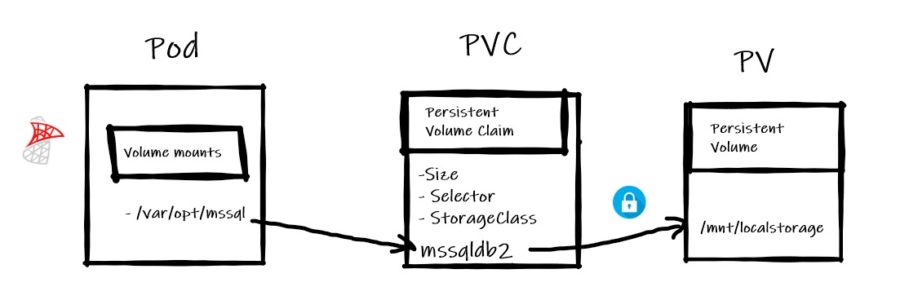

Let’s set the context. Here is a picture of my K8s environment:

    $ kubectl get nodes
    NAME                     STATUS   ROLES    AGE   VERSION
    k8m.dbi-services.test    Ready    master   97d   v1.14.1
    k8n1.dbi-services.test   Ready    <none>   97d   v1.14.1
    k8n2.dbi-services.test   Ready    <none>   97d   v1.14.1

I used the new local-storage Storage class (available with K8s v.1.14+):

    $ kubectl get sc
    NAME            PROVISIONER                    AGE
    local-storage   kubernetes.io/no-provisioner   4d
    $ kubectl describe sc local-storage
    Name:                  local-storage
    IsDefaultClass:        No
    Annotations:           <none>
    Provisioner:           kubernetes.io/no-provisioner
    Parameters:            <none>
    AllowVolumeExpansion:  <unset>
    MountOptions:          <none>
    ReclaimPolicy:         Delete
    VolumeBindingMode:     WaitForFirstConsumer
    Events:                <none>

I configured a persistent volume based on this local-storage class that points to /mnt/localstorage on my k8n1 node. The access mode and the Retain policy are configured to meet the best practices for databases.

    $ cat StoragePV.yaml
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: my-local-pv
    spec:
      capacity:
        storage: 5Gi
      accessModes:
      - ReadWriteOnce
      persistentVolumeReclaimPolicy: Retain
      volumeMode: Filesystem
      storageClassName: local-storage
      local:
        path: /mnt/localstorage
      nodeAffinity:
        required:
          nodeSelectorTerms:
          - matchExpressions:
            - key: kubernetes.io/hostname
              operator: In
              values:
              - k8n1.dbi-services.test

For the sake of simplicity, I applied the default configuration with all SQL Server related files stored in /var/opt/mssql. I configured the underlying storage and folder permissions accordingly with my custom mssql user (UID = 10001) and group (GID = 10001) created on the k8n1 host. Note that the UID matches that of the mssql user created within the container.

    $ hostname
    k8n1.dbi-services.test
    $ id mssql
    uid=10001(mssql) gid=10001(mssql) groups=10001(mssql)
    $ ls -ld /mnt/localstorage/
    drwxrwx--- 6 mssql mssql 59 Sep 26 20:57 /mnt/localstorage/

My deployment file is as follows. It includes the security context that specifies a non-root container configuration with the UID / GID of the custom user created previously (runAsUser and runAsGroup parameters):

    $ cat ReplicaSet.yaml
    apiVersion: apps/v1beta1
    kind: Deployment
    metadata:
      name: mssql-deployment-2
    spec:
      replicas: 1
      template:
        metadata:
          labels:
            app: mssql-2
        spec:
          securityContext:
            runAsUser: 10001
            runAsGroup: 10001
          terminationGracePeriodSeconds: 10
          containers:
          - name: mssql-2
            image: trow.kube-public:31000/2019-latest-non-root
            ports:
            - containerPort: 1433
            env:
            - name: MSSQL_PID
              value: "Developer"
            - name: ACCEPT_EULA
              value: "Y"
            - name: MSSQL_SA_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: sql-secrets
                  key: sapassword
            volumeMounts:
            - name: mssqldb
              mountPath: /var/opt/mssql
          volumes:
          - name: mssqldb
            persistentVolumeClaim:
              claimName: mssql-data-2
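The deployment refers to a persistent volume claim named mssql-data-2, whose manifest is not shown in this post. A plausible claim, written here through a small shell helper, might look like the sketch below. Only the claim name comes from the deployment; the storage class, access mode and 5Gi request are assumptions made to mirror the persistent volume defined earlier, and the helper name `write_pvc_manifest` is hypothetical.

```shell
#!/bin/sh
# Write a PersistentVolumeClaim manifest to the given path.
# Assumption: the claim mirrors the PersistentVolume's storage class,
# access mode and capacity; only the name mssql-data-2 is known from
# the deployment specification.
write_pvc_manifest() {
    cat > "$1" <<'EOF'
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: mssql-data-2
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: local-storage
  resources:
    requests:
      storage: 5Gi
EOF
}
```

A possible usage would be `write_pvc_manifest StoragePVC.yaml && kubectl apply -f StoragePVC.yaml`, where the file name is arbitrary. With the WaitForFirstConsumer binding mode, the claim would be expected to stay Pending until the pod is scheduled.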

Obviously, if the underlying persistent volume does not have the correct permissions, you will get an error when provisioning the MSSQL pod because the sqlservr process will not have the privileges to create or access the SQL Server related files, as shown below:

    $ kubectl get pod
    NAME                                 READY   STATUS   RESTARTS   AGE
    mssql-deployment-2-8b4d7f7b7-x4x8w   0/1     Error    2          30s
    $ kubectl logs mssql-deployment-2-8b4d7f7b7-x4x8w
    SQL Server 2019 will run as non-root by default.
    This container is running as user mssql.
    To learn more visit https://go.microsoft.com/fwlink/?linkid=2099216.
    /opt/mssql/bin/sqlservr: Error: Directory [/var/opt/mssql/system/] could not be created. Errno [13]

If well configured, everything should work as expected and your container should run and interact correctly with the corresponding persistent volume in the security context defined in your YAML specification:

All this stuff also applies to SQL Server 2017.

See you!

By David Barbarin
