In this blog post, I will show you what I did to troubleshoot an interesting problem with Memory on SQL Server.

It all started with a job performing DBCC CHECKDB on all databases taking hours to complete instead of 10 minutes.

So the Job ran outside of its maintenance window, still running in the morning when users come back to the office. They immediately complained about poor application performance.

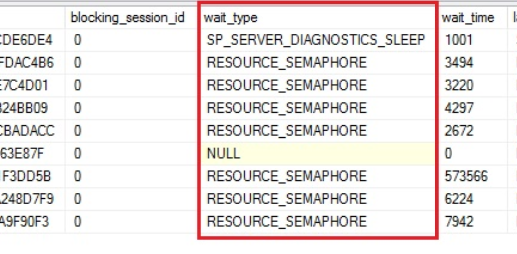

While running the CHECKDB we could see many sessions in a SUSPENDED state with SELECT queries waiting on “RESOURCE_SEMAPHORE”.

The instance seemed to be starving on Memory.

The instance seemed to be starving on Memory.

We tried to increase the “Max Server Memory” by 2GB. This solved the issue. Temporarily.

Around a week later the same issue occurred again with users complaining of very bad performance. The CHECKDB Job was running again for hours.

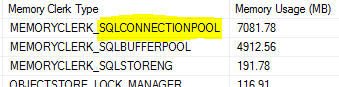

I did more analysis of the memory usage for this instance. Identifying the biggest memory consumers is key to proceeding with investigation.

The amount of memory allocated to each memory clerk can be found using the sys.dm_os_memory_clerks DMV.

We can notice a very high value for the Memory clerk “SQLCONNECTIONPOOL”.

The “Max Server Memory” value of this instance is configured to 14GB. So half of it is allocated to the SQLCONNECTIONPOOL Memory Clerk. This is obviously not a normal situation.

We decided to perform a Failover of the Availability Group to clear all the memory on the instance to perform the CHECKDB.

From there I created a Job monitoring the Memory Clerk usage. The query is from one of Glenn Berry’s diagnostic queries.

Here is the SQL.

|

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

|

-- The monitoring Tableuse dbi_toolsgocreate table monitoring.memoryClerkType ( mct_id int identity not null primary key , mct_logdate datetime2 default getdate() , mct_MemoryClerkType nvarchar(256) not null , mct_memoryUsageMB DECIMAL(15,2));-- The query inside an SQL Server Agent Jobinsert into dbi_tools.monitoring.memoryClerkType(mct_MemoryClerkType, mct_memoryUsageMB) SELECT TOP(10) mc.[type] AS [Memory Clerk Type], CAST((SUM(mc.pages_kb)/1024.0) AS DECIMAL (15,2)) AS [Memory Usage (MB)] FROM sys.dm_os_memory_clerks AS mc WITH (NOLOCK) GROUP BY mc.[type] ORDER BY SUM(mc.pages_kb) DESC OPTION (RECOMPILE); |

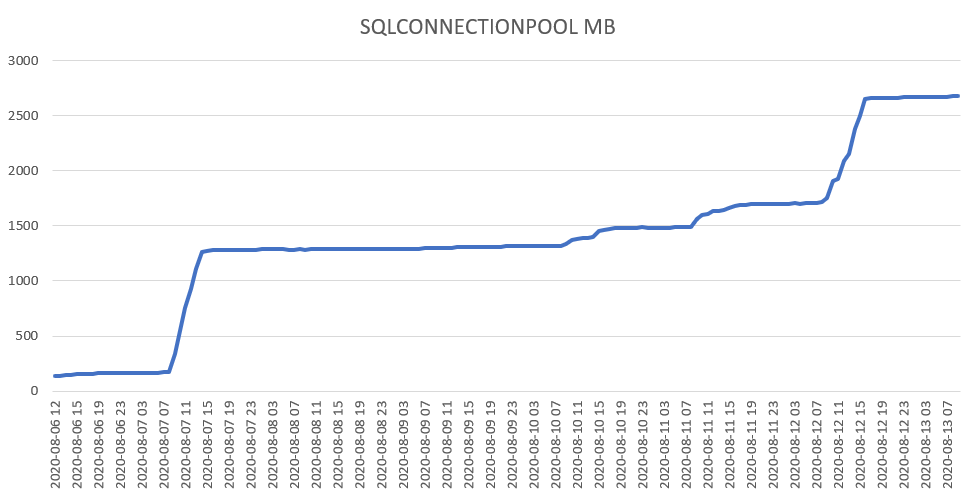

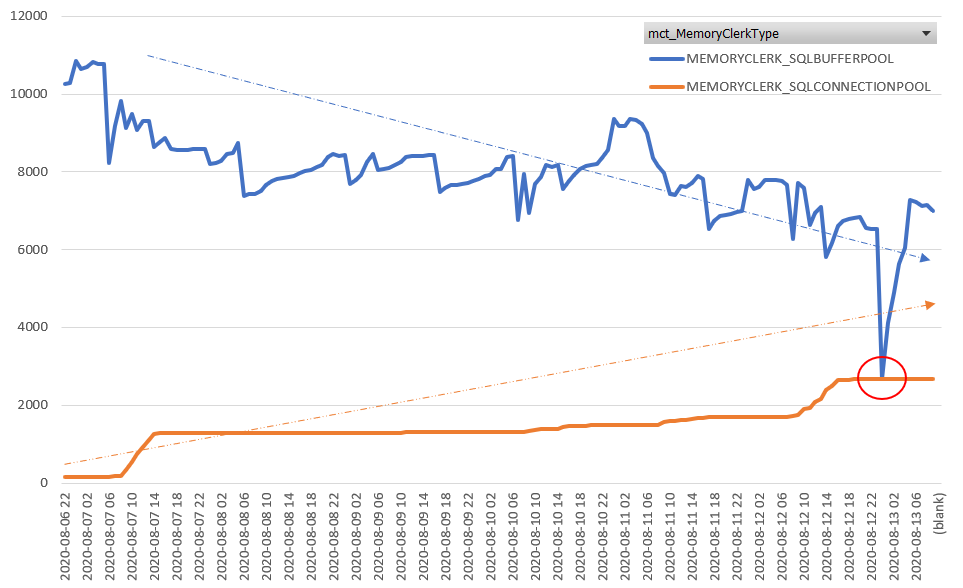

This allowed me to confirm that the Memory allocated to SQLCONNECTIONPOOL is increasing over time.

This causes internal Memory pressure on the instance. As more and more memory is allocated to this Memory Clerk the memory available for the Buffer Pool slowly decreases. Any event that requires a lot of memory like a DBCC CHECKDB would flush memory from the Buffer Pool but the memory allocated to SQLCONNECTIONPOOL is be flushed. This is a real issue.

This issue is described in an SQL Server CAT article: Watch out those prepared SQL statements

Basically, some application server is calling sp_prepare system procedure through an ODBC driver and do not call sp_unprepare.

This seems to be a bug in the ODBC driver. There’s not much to do on the MSSQL server.

My instance has dozens of databases for different applications. I need to identify which database, and so which application server needs an ODBC driver update.

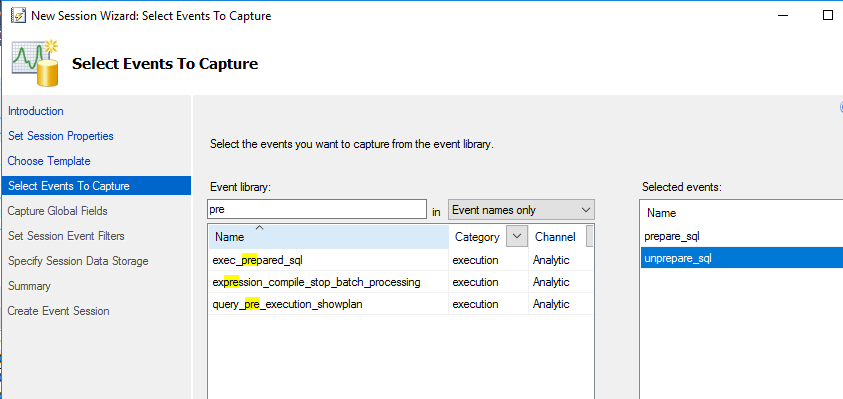

To do so I created an Extended Event session. There are events for sp_prepare and sp_unprepare in the “execution” category.

Here is the T-SQL for this XE session:

|

1

2

3

4

5

6

7

8

9

10

|

CREATE EVENT SESSION [dbi_sna_memory] ON SERVER ADD EVENT sqlserver.prepare_sql( ACTION(sqlserver.client_app_name,sqlserver.client_hostname ,sqlserver.database_name,sqlserver.session_id,sqlserver.username)),ADD EVENT sqlserver.unprepare_sql( ACTION(sqlserver.client_app_name,sqlserver.client_hostname ,sqlserver.database_name,sqlserver.session_id,sqlserver.username))ADD TARGET package0.event_file(SET filename=N'dbi_sna_memory',max_file_size=(500))WITH (STARTUP_STATE=ON)GO |

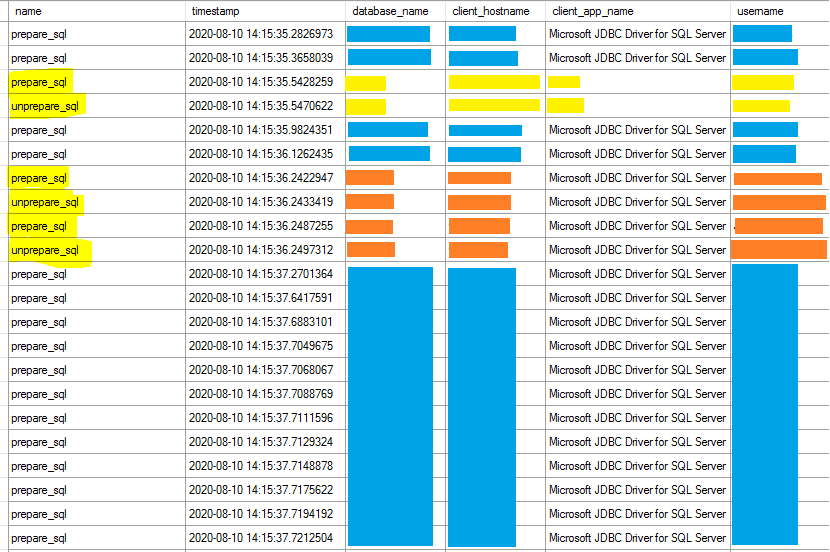

Looking at the live data I could see a lot of sp_prepare without any sp_unprepare for one of the databases.

Sessions to the database in blue color doesn’t seem to call the sp_unprepare system procedure.

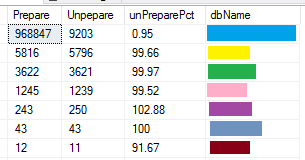

Looking at the Extended Event data with SQL I get a better view of the situation.

Based on a sample of approximately 1 Million events, it’s obvious that the first database is the one doing the most sp_prepare events. There are only 1% of sp_unprepare calls which is clearly abnormal compared to the other databases with an expected value of 99%-100%.

Just for information here is the query I did to get the result above:

|

1

2

3

4

5

6

7

8

|

select SUM(IIF(eventName='prepare_sql', 1, 0)) AS Prepare , SUM(IIF(eventName='unprepare_sql', 1, 0)) AS Unpepare , ROUND(CAST(SUM(IIF(eventName='unprepare_sql', 1, 0)) AS FLOAT)/SUM(IIF(eventName='prepare_sql', 1, 0))*100, 2) AS unPreparePct , dbNamefrom dbi_tools.dbo.xe_Memory_data -- Table with data from the XE session filegroup by dbNameorder by 1 desc |

The culprit is now identified. We can update the client layer on the application server and look for improvement in memory usage.

Written by Steven Naudet

![Thumbnail [60x60]](https://www.dbi-services.com/blog/wp-content/uploads/2022/12/microsoft-square.png)

![Thumbnail [90x90]](https://www.dbi-services.com/blog/wp-content/uploads/2024/03/AHI_web.jpg)

![Thumbnail [90x90]](https://www.dbi-services.com/blog/wp-content/uploads/2025/11/LTO_WEB.jpg)