Docker swarm mode provides an easy way to publish ports for services. Indeed, in this mode all nodes participate in an ingress routing mesh and accept connections to any service port published. Thus, all incoming requests are routed to available nodes hosting a service.

That’s a pretty cool feature but it has some drawbacks. Let’s say that a node goes wrong … In this case the application must retry connecting to the next available node and it will likely imply changing the application connection string or using a DNS redirection. A load balancer outside of the Swarm provides a better way to connect to the containers without having to worry about the cluster nodes from an application perspective. Thanks to the ingress routing all of the published services are available through any of the swarm nodes and the load balancer can be set to use the swarm private IP addresses without a concern of which node is hosting what service.

After taking a look at the existing open source projects / solutions in the market, I quickly reduced the scope of my search to only 2 ones: nginx and HAProxy. Each product has pros and cons but I choose HAProxy that fit well with my tests. In fact, I used more specifically the dockercloud-haproxy because it includes an HAProxy docker image that runs with Docker Swarm mode including docker-compose. I’m not sure this project is dead or alive referring to the dockerhub page that states the following: Unfortunately, this project is currently put into maintenance mode. Please do not send any PR for new features. We will still fix bugs if there is any. It exists some other alternatives as proxy.dockerflow but once again the dockercloud-haproxy project remained relevant for what I wanted to test.

Here my docker-compose file:

version: '3.1'

services:

db:

build: .

image: 127.0.0.1:5000/dbi_linux_sql2017:CU4

ports:

- "1433:1433"

volumes:

- /u00/db2:/u00

- /u01/db2:/u01

- /u02/db2:/u02

- /u03/db2:/u03

- /u98/db2:/u98

environment:

- MSSQL_SA_PASSWORD_FILE=/run/secrets/mssql_sa_password

- ACCEPT_EULA=Y

- MSSQL_PID=Developer

- MSSQL_USER=dbi

- MSSQL_USER_PASSWORD_FILE=/run/secrets/mssql_user_password

- TZ=Europe/Berlin

- SERVICE_PORTS=1433

networks:

- back-tier

deploy:

replicas: 1

placement:

constraints: [node.role != manager]

resources:

limits:

cpus: '1'

memory: 3584M

reservations:

cpus: '1'

memory: 3072M

secrets:

- source: mssql_sa_password

target: mssql_sa_password

- source: mssql_user_password

target: mssql_user_password

proxy:

image: dockercloud/haproxy

depends_on:

- db

environment:

- BALANCE=leastconn

- ADDITIONAL_SERVICES=project_dir:db

- MODE=tcp

volumes:

- /var/run/docker.sock:/var/run/docker.sock

ports:

- 80:80

- 1936:1936

networks:

- back-tier

deploy:

placement:

constraints: [node.role == manager]

networks:

back-tier:

external:

name: backend-server

front-tier:

external:

name: frontend-server

secrets: # top level secrets block

mssql_sa_password:

external: true

mssql_user_password:

external: true

It includes 2 services:

- db (my SQL Server container)

- proxy (my HAProxy server)

I used some Swarm Mode specific environment variables:

- SERVICE_PORTS=1433 – to expose the port of my db service (mssql default port)

- BALANCE=leastconn – because it is recommended with long sessions as LDAP, SQL, TSE etc…

- ADDITIONAL_SERVICES=project_dir:db – to identify the db service from the proxy service

- MODE=tcp – mode of load balancing for HAProxy (TCP in my case)

I deployed the stack as following:

$ docker stack deploy -c docker-compose-test.yml mssql Ignoring unsupported options: build Creating service mssql_db Creating service mssql_proxy

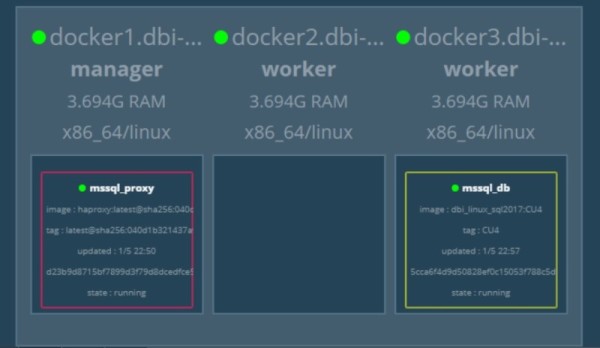

My service state was as follows:

$ docker service ls --filter name=mssql ID NAME MODE REPLICAS IMAGE PORTS 26zdisl9r64y mssql_db replicated 1/1 127.0.0.1:5000/dbi_linux_sql2017:CU4 *:1433->1433/tcp nhje3081gwr8 mssql_proxy replicated 1/1 dockercloud/haproxy:latest *:80->80/tcp,*:1936->1936/tcp

Concerning the mssql_proxy service we may notice the exposed ports 80 and 1936. The former will be used to connect to the HAProxy for the SQL Server connection redirection and the latter concers the default port to get HAProxy stats information (from a web browser).

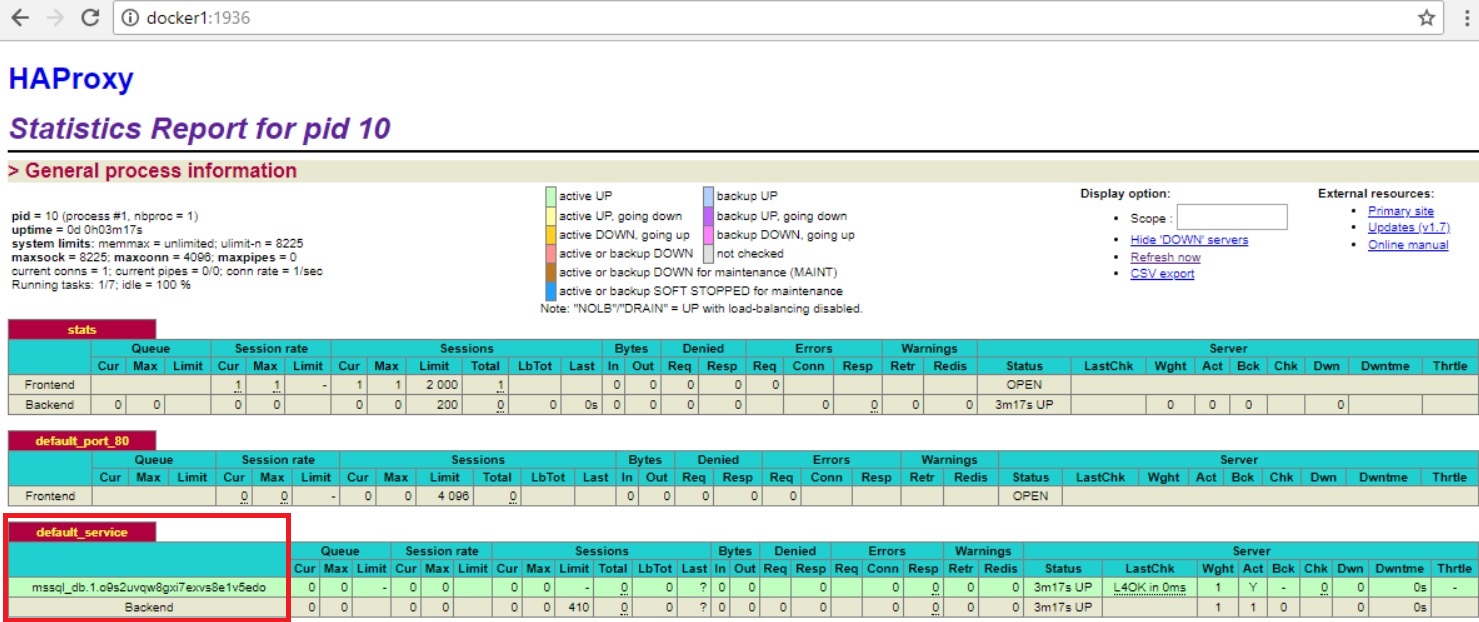

Here an example of my HAProxy output:

The db service task (mssql_db.1.o9s2xxxxx) – that corresponds in fact to my service task (or container) is well-identified by the HA proxy.

So, I tried to connect to my SQL Server DB container through the HAProxy:

C:\Users\clustadmin>sqlcmd -S docker1,80 -Usa -PPassw0rd1 -Q"SELECT @@SERVERNAME" --------------------------------------------------------------------------------- cf7f9d6036f3

It worked like a charm!

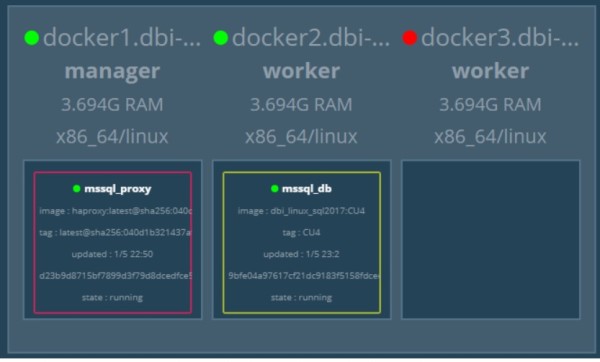

The next step consisted in simulating a failure of my docker node DOCKER3 …

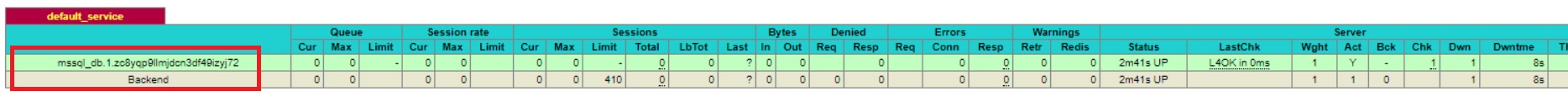

… and try again a connection to the SQL Server DB container that has restarted on DOCKER2 node. As expected, the connection kept working and was automatically redirected by the HAProxy to the next available node. Obviously in this case the redirection was not transparent for the application. We have to take to into account the short period of outage that corresponds to the mssql_db container restart duration. By the way, if you take a look at the HAProxy default_service section you may notice that the task id has changed (mssql_db.1.zo8yqxxxxxx) meaning the system has created a new task (or container). However, my mssql_db container host name remained the same in my case.

C:\Users\clustadmin>sqlcmd -S docker1,80 -Usa -PPassw0rd1 -Q"SELECT @@SERVERNAME" --------------------------------------------------------------------------------- cf7f9d6036f3

Another interesting point I had to dig further concerns the HAProxy health check by itself. In my case I used TCP-based health check to ensure the service is alive but it is probably not good enough to check if my SQL Server instance (in other words my application) is working correctly. I spent some times to read articles and documentations and I discovered we may use custom health checks with HAProxy with databases as MySQL, PostgreSQL and Redis. Unfortunately, at the time I’m writing this blog post nothing concerning SQL Server probably because it is pretty new in this area (maybe I get wrong … so please feel free to comment) . I found out some other alternatives as xinetd that may be used to trigger a custom routine (by using sqlcmd command line tool for example) but in my context it makes the game a little bit more complex because I have to include it to my SQL Server docker image. After thinking a little bit about this issue, I realized we already implemented such routine directly inside the SQL Server image itself (HEALTHCHECK section) that uses precisely the sqlcmd command line tool to check the SQL Server connection is working correctly.

/opt/mssql-tools/bin/sqlcmd -S localhost,$MSSQL_TCP_PORT -U sa -P ${SA_PASSWORD} -Q "select 1"

By the way, the task status can be viewed through the docker ps command as follows:

$docker ps -f name=mssql --format 'table {{.ID}}\t{{.Names}}\t{{.CreatedAt}}\t{{.Status}}'

CONTAINER ID NAMES CREATED AT STATUS

9bfe04a97617 mssql_db.1.zc8yqp9llmjdcn3df49izyj72 2018-05-01 23:01:39 +0200 CEST Up 9 hours (healthy)

So, the combination of the docker health check routine and the HAProxy TCP health check seems to be a good solution to address different kind of failure including docker swarm node failures and application failures as well.

This is just the first step about using an HAProxy with SQL Server (redirection capabilities). You may also extend this first scenario with a low cost solution including multiples SQL Server Express containers to address scale-out needs with Reporting queries for instance. I remember Christophe Laporte(b) gave a session about some years ago. I will try to blog about soon!

See you!

By David Barbarin

![Thumbnail [60x60]](https://www.dbi-services.com/blog/wp-content/uploads/2022/12/microsoft-square.png)

![Thumbnail [90x90]](https://www.dbi-services.com/blog/wp-content/uploads/2024/03/AHI_web.jpg)

![Thumbnail [90x90]](https://www.dbi-services.com/blog/wp-content/uploads/2022/08/DWE_web-min-scaled.jpg)