This is the first in a series of posts I have wanted to write for a long time: Getting started with Red Hat Satellite. In case you don’t know what it is, this statement from the official Red Hat website summarizes it quite well: “As your Red Hat® environment continues to grow, so does the need to manage it to a high standard of quality. Red Hat Satellite is an infrastructure management product specifically designed to keep Red Hat Enterprise Linux® environments and other Red Hat infrastructure running efficiently, properly secured, and compliant with various standards.” This first post is all about the installation of Satellite, which is surprisingly easy. Let’s go.

What you need as a starting point is a Red Hat Enterprise Linux minimal installation, either version 6 or 7. In my case it is the latest 7 release as of today:

[root@satellite ~]$ cat /etc/redhat-release
Red Hat Enterprise Linux Server release 7.5 (Maipo)

Of course the system should be fully registered so you are able to install updates, fixes and additional packages (which requires a Red Hat subscription):

[root@satellite ~]$ subscription-manager list
+-------------------------------------------+
    Installed Product Status
+-------------------------------------------+
Product Name: Red Hat Enterprise Linux Server
Product ID: 69
Version: 7.5
Arch: x86_64
Status: Subscribed
Status Details:
Starts: 11/20/2017
Ends: 09/17/2019
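If you want to check this from a script rather than by eye, the output can be parsed. The following is only a minimal sketch: the function name `all_subscribed` is made up for this post, and it assumes the `Status:` lines look like the ones shown above.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of subscription-manager):
# reads `subscription-manager list` output on stdin and succeeds only if
# at least one "Status:" line exists and every one of them says "Subscribed".
all_subscribed() {
    awk -F': *' '
        $1 == "Status" {
            sub(/[ \t]+$/, "", $2)      # strip trailing whitespace
            seen++
            if ($2 != "Subscribed") bad++
        }
        END { exit (seen > 0 && bad == 0) ? 0 : 1 }
    '
}
```

It could be used like this: `subscription-manager list | all_subscribed || echo "system is not fully subscribed" >&2`.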

As time synchronization is critical, it should be up and running before proceeding. On Red Hat Enterprise Linux, chrony is the tool to go for:

[root@satellite ~]$ yum install -y chrony
[root@satellite ~]$ systemctl enable chronyd
[root@satellite ~]$ systemctl start chronyd
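Starting the service does not mean the clock is already synchronized. A small sketch of how that could be verified, assuming the `Leap status` line that `chronyc tracking` prints; the function name `chrony_synced` is hypothetical:

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `chronyc tracking` output on stdin and
# succeeds only if the "Leap status" field reports "Normal".
# chrony reports "Not synchronised" there while it has no usable source.
chrony_synced() {
    awk -F': *' '
        /^Leap status/ {
            sub(/[ \t]+$/, "", $2)      # strip trailing whitespace
            status = $2
        }
        END { exit (status == "Normal") ? 0 : 1 }
    '
}
```

Usage: `chronyc tracking | chrony_synced || echo "clock is not synchronized yet" >&2`.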

Satellite requires a fully qualified hostname, so let’s add that to the hosts file (of course you would do that with DNS in a real environment):

[root@satellite mnt]# cat /etc/hosts
127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
192.168.22.11 satellite.it.dbi-services.com satellite
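To confirm that the system really resolves its own name to a fully qualified one, something like the following could be used. This is a rough sketch with a made-up function name (`is_fqdn`); it only checks the shape of the name (at least one dot, no empty labels), not whether the name is correct for your environment.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeeds if the argument looks like a fully
# qualified name, i.e. contains a dot and has no empty labels.
is_fqdn() {
    case "$1" in
        *.*) ;;                     # needs at least one dot
        *)   return 1 ;;
    esac
    case "$1" in
        .*|*.|*..*) return 1 ;;     # no leading, trailing or empty labels
    esac
    return 0
}
```

Usage: `is_fqdn "$(hostname -f)" || echo "hostname is not fully qualified" >&2`.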

As a Satellite server only makes sense when clients can connect to it, a few ports need to be opened (I am not going into the details here; that will be the topic of another post):

[root@satellite ~]$ firewall-cmd --permanent \
    --add-port="53/udp" --add-port="53/tcp" \
    --add-port="67/udp" --add-port="69/udp" \
    --add-port="80/tcp" --add-port="443/tcp" \
    --add-port="5000/tcp" --add-port="5647/tcp" \
    --add-port="8000/tcp" --add-port="8140/tcp" \
    --add-port="9090/tcp"
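Note that rules added with `--permanent` only become active in the running firewall after a `firewall-cmd --reload`. If you prefer to keep the port list in one place, the call could be wrapped as sketched below. The variable and function names (`SATELLITE_PORTS`, `port_args`, `open_satellite_ports`) and the `DRY_RUN` switch are my own inventions for illustration; the ports are the ones from the command above.

```shell
#!/usr/bin/env bash
# Ports taken from the firewall-cmd call above.
SATELLITE_PORTS="53/udp 53/tcp 67/udp 69/udp 80/tcp 443/tcp 5000/tcp 5647/tcp 8000/tcp 8140/tcp 9090/tcp"

# Print one --add-port argument per line for the given ports.
port_args() {
    local port
    for port in "$@"; do
        printf -- '--add-port=%s\n' "$port"
    done
}

# Hypothetical wrapper: with DRY_RUN=1 the command is only printed,
# otherwise the ports are added permanently and the firewall is reloaded.
open_satellite_ports() {
    # Word splitting is intended here: one argument per port.
    set -- $(port_args $SATELLITE_PORTS)
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo firewall-cmd --permanent "$@"
    else
        firewall-cmd --permanent "$@" && firewall-cmd --reload
    fi
}
```

Running `DRY_RUN=1 open_satellite_ports` first shows the exact command without touching the firewall.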

That’s basically all you need to do as preparation. There are several methods to install Satellite. I will use the downloaded ISO as the source (this is called the “Disconnected Installation”, which is what you will usually need in enterprise environments):

[root@satellite ~]$ ls -la /var/tmp/satellite-6.3.3-rhel-7-x86_64-dvd.iso
-rw-r--r--. 1 root root 3041613824 Oct 11 18:16 /var/tmp/satellite-6.3.3-rhel-7-x86_64-dvd.iso

First of all, the required packages need to be installed, so we need to mount the ISO:

[root@satellite ~]$ mount -o ro,loop /var/tmp/satellite-6.3.3-rhel-7-x86_64-dvd.iso /mnt
[root@satellite ~]$ cd /mnt/
[root@satellite mnt]# ls
addons extra_files.json install_packages media.repo Packages repodata RHSCL TRANS.TBL

Installing the packages required for Satellite is just a matter of calling the “install_packages” script:

[root@satellite mnt]$ ./install_packages
This script will install the satellite packages on the current machine.
- Ensuring we are in an expected directory.
- Copying installation files.
- Creating a Repository File
- Creating RHSCL Repository File
- Checking to see if Satellite is already installed.
- Importing the gpg key.
- Installation repository will remain configured for future package installs.
- Installation media can now be safely unmounted.
Install is complete. Please run satellite-installer --scenario satellite

The output already tells us what to do next: execute the “satellite-installer” script (I will go with the defaults here, but there are many options you could specify at this point):

[root@satellite mnt]$ satellite-installer --scenario satellite
This system has less than 8 GB of total memory. Please have at least 8 GB of total ram free before running the installer.
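A check like this can also be done up front, before mounting anything. The sketch below is not the installer’s own test, just an approximation with hypothetical function names (`mem_total_kb`, `has_min_memory`). Keep in mind that a machine configured with exactly 8 GB usually reports slightly less in `MemTotal`, so the strict threshold used here is an assumption and may be too tight.

```shell
#!/usr/bin/env bash
# Print MemTotal in kB from a meminfo-style file (default: /proc/meminfo).
mem_total_kb() {
    awk '$1 == "MemTotal:" { print $2 }' "${1:-/proc/meminfo}"
}

# Hypothetical check: succeed if the given amount of memory (in kB)
# is at least MIN_GB gigabytes. MIN_GB defaults to 8, the value from
# the installer message above.
has_min_memory() {
    local kb=$1
    local min_gb=${2:-8}
    [ "$kb" -ge $((min_gb * 1024 * 1024)) ]
}
```

Usage: `has_min_memory "$(mem_total_kb)" || echo "less than 8 GB of memory" >&2`.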

Hm, I am running this locally in a VM, so let’s increase the memory, at least for the duration of the installation, and try again:

[root@satellite ~]$ satellite-installer --scenario satellite
Installing Package[grub2-efi-x64] [0%] [ ]

… and here we go. Some minutes later the configuration/installation is completed:

[root@satellite ~]$ satellite-installer --scenario satellite
Installing Done [100%] [.......................................]
  Success!

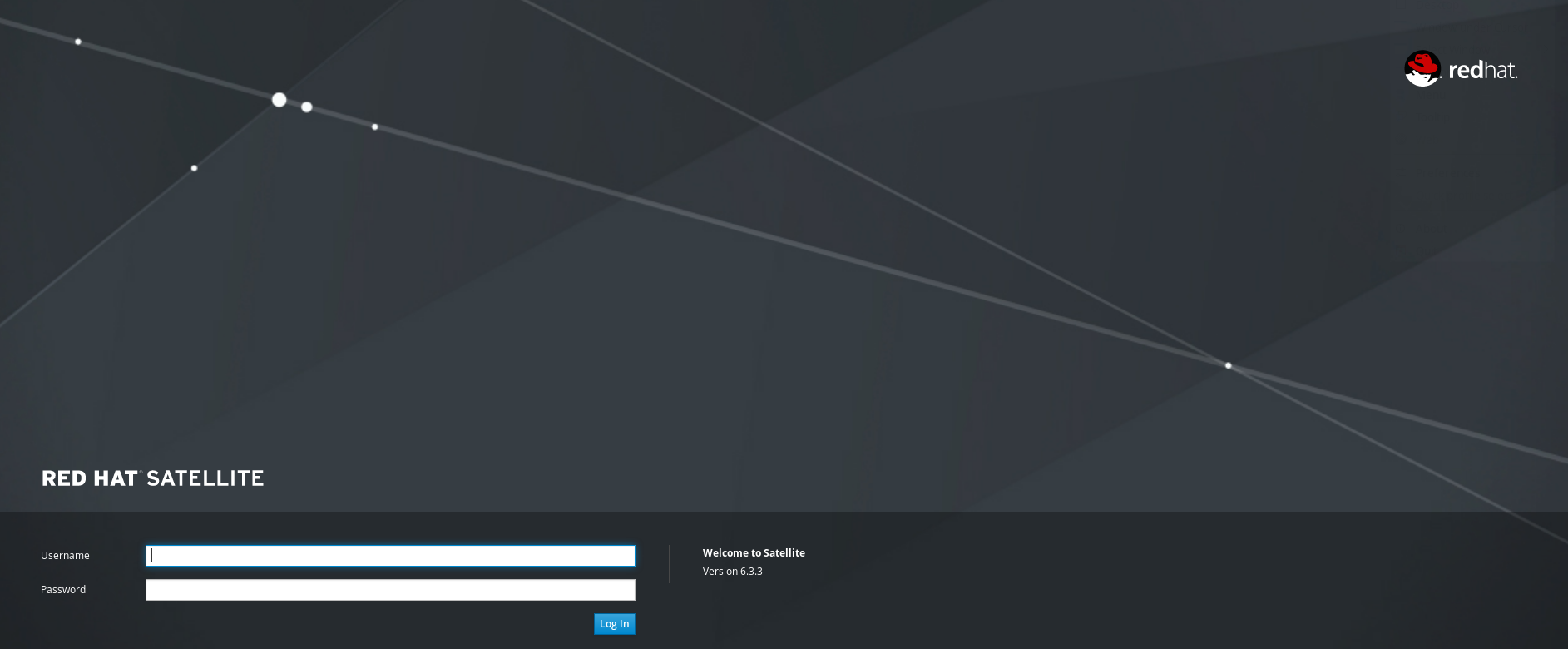

  * Satellite is running at https://satellite.it.dbi-services.com
      Initial credentials are admin / L79AAUCMJWf6Y4HL
  * To install an additional Capsule on separate machine continue by running:
      capsule-certs-generate --foreman-proxy-fqdn "$CAPSULE" --certs-tar "/root/$CAPSULE-certs.tar"
  * To upgrade an existing 6.2 Capsule to 6.3:
      Please see official documentation for steps and parameters to use when upgrading a 6.2 Capsule to 6.3.
  The full log is at /var/log/foreman-installer/satellite.log

Before we go into the details of how to initially configure the system in the next post, let’s check what we have running. A very good choice (at least if you ask me 🙂) is to use PostgreSQL as the repository database:

[root@satellite ~]$ ps -ef | grep postgres
postgres 1264 1 0 08:56 ? 00:00:00 /usr/bin/postgres -D /var/lib/pgsql/data -p 5432
postgres 1381 1264 0 08:56 ? 00:00:00 postgres: logger process
postgres 2111 1264 0 08:57 ? 00:00:00 postgres: checkpointer process
postgres 2112 1264 0 08:57 ? 00:00:00 postgres: writer process
postgres 2113 1264 0 08:57 ? 00:00:00 postgres: wal writer process
postgres 2114 1264 0 08:57 ? 00:00:00 postgres: autovacuum launcher process
postgres 2115 1264 0 08:57 ? 00:00:00 postgres: stats collector process
postgres 2188 1264 0 08:58 ? 00:00:00 postgres: candlepin candlepin 127.0.0.1(36952) idle
postgres 2189 1264 0 08:58 ? 00:00:00 postgres: candlepin candlepin 127.0.0.1(36954) idle
postgres 2193 1264 0 08:58 ? 00:00:00 postgres: candlepin candlepin 127.0.0.1(36958) idle
postgres 2194 1264 0 08:58 ? 00:00:00 postgres: candlepin candlepin 127.0.0.1(36960) idle
postgres 2218 1264 0 08:58 ? 00:00:00 postgres: candlepin candlepin 127.0.0.1(36964) idle
postgres 2474 1264 0 08:58 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2541 1264 0 08:58 ? 00:00:00 postgres: candlepin candlepin 127.0.0.1(36994) idle
postgres 2542 1264 0 08:58 ? 00:00:00 postgres: candlepin candlepin 127.0.0.1(36996) idle
postgres 2543 1264 0 08:58 ? 00:00:00 postgres: candlepin candlepin 127.0.0.1(36998) idle
postgres 2609 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2618 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2627 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2630 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2631 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2632 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2634 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2660 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2667 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2668 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2672 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2677 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2684 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2685 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2689 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
root 2742 2303 0 08:59 pts/0 00:00:00 grep --color=auto postgres

Let’s quickly check if that is a supported version of PostgreSQL:

[root@satellite ~]$ cat /var/lib/pgsql/data/PG_VERSION
9.2
[root@satellite ~]$ su - postgres
-bash-4.2$ psql
psql (9.2.24)
Type "help" for help.
postgres=# select version();
                                                    version
---------------------------------------------------------------------------------------------------------------
 PostgreSQL 9.2.24 on x86_64-redhat-linux-gnu, compiled by gcc (GCC) 4.8.5 20150623 (Red Hat 4.8.5-28), 64-bit
(1 row)

Hm, 9.2 is already out of support. That is not something we would recommend to our customers, but as long as Red Hat itself supports it, it is probably fine. Just do not expect to get any fixes for that release from the PostgreSQL community. Going a bit further into the details, the PostgreSQL instance contains two additional users:

postgres=# \du
                             List of roles
 Role name |                   Attributes                   | Member of
-----------+------------------------------------------------+-----------
 candlepin |                                                | {}
 foreman   |                                                | {}
 postgres  | Superuser, Create role, Create DB, Replication | {}

That corresponds to the connections to the instance we can see in the process list:

-bash-4.2$ ps -ef | egrep "foreman|candlepin" | grep postgres
postgres 2541 1264 0 08:58 ? 00:00:00 postgres: candlepin candlepin 127.0.0.1(36994) idle
postgres 2542 1264 0 08:58 ? 00:00:00 postgres: candlepin candlepin 127.0.0.1(36996) idle
postgres 2543 1264 0 08:58 ? 00:00:00 postgres: candlepin candlepin 127.0.0.1(36998) idle
postgres 2609 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2618 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2627 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2630 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2631 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2632 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2634 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2677 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2684 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2685 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 2689 1264 0 08:59 ? 00:00:00 postgres: foreman foreman [local] idle
postgres 3143 1264 0 09:03 ? 00:00:00 postgres: candlepin candlepin 127.0.0.1(37114) idle
postgres 3144 1264 0 09:03 ? 00:00:00 postgres: candlepin candlepin 127.0.0.1(37116) idle
postgres 3145 1264 0 09:03 ? 00:00:00 postgres: candlepin candlepin 127.0.0.1(37118) idle
postgres 3146 1264 0 09:03 ? 00:00:00 postgres: candlepin candlepin 127.0.0.1(37120) idle
postgres 3147 1264 0 09:03 ? 00:00:00 postgres: candlepin candlepin 127.0.0.1(37122) idle
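Instead of counting these lines by hand, the process list can be summarized per role. A minimal sketch, assuming the process titles look like the ones above (`postgres: <user> <database> ...` for client connections, background processes ending in `process`); the function name `pg_backends_per_role` is hypothetical:

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `ps -ef` output on stdin and prints
# "<role> <count>" for every PostgreSQL client connection.
pg_backends_per_role() {
    awk '
        # Skip background processes such as "postgres: logger process".
        $NF != "process" {
            # In `ps -ef` output the command starts at field 8.
            for (i = 8; i < NF; i++)
                if ($i == "postgres:") { count[$(i + 1)]++; break }
        }
        END { for (role in count) print role, count[role] }
    ' | sort
}
```

Usage: `ps -ef | pg_backends_per_role`. From within psql, a query such as `select usename, count(*) from pg_stat_activity group by usename;` gives the same kind of overview without relying on process titles.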

Foreman is responsible for life cycle management and Candlepin is responsible for subscription management. Both are fully open source and can also be used on their own. What else do we have?

[root@satellite ~]$ ps -ef | grep -i mongo
mongodb 1401 1 0 08:56 ? 00:00:08 /usr/bin/mongod --quiet -f /etc/mongod.conf run
root 3736 2303 0 09:11 pts/0 00:00:00 grep --color=auto -i mongo

In addition to the PostgreSQL instance there is also a MongoDB process running. What is it for? It is the database behind Pulp (see below), which is integrated through Katello, a Foreman plugin that brings “the full power of content management alongside the provisioning and configuration capabilities of Foreman”.

The next component is Pulp:

[root@satellite ~]# ps -ef | grep pulp
apache 1067 1 0 08:56 ? 00:00:03 /usr/bin/python /usr/bin/celery beat --app=pulp.server.async.celery_instance.celery --scheduler=pulp.server.async.scheduler.Scheduler
apache 1076 1 0 08:56 ? 00:00:02 /usr/bin/python /usr/bin/pulp_streamer --nodaemon --syslog --prefix=pulp_streamer --pidfile= --python /usr/share/pulp/wsgi/streamer.tac
apache 1085 1 0 08:56 ? 00:00:11 /usr/bin/python /usr/bin/celery worker -A pulp.server.async.app -n resource_manager@%h -Q resource_manager -c 1 --events --umask 18 --pidfile=/var/run/pulp/resource_manager.pid
apache 1259 1 0 08:56 ? 00:00:12 /usr/bin/python /usr/bin/celery worker -n reserved_resource_worker-0@%h -A pulp.server.async.app -c 1 --events --umask 18 --pidfile=/var/run/pulp/reserved_resource_worker-0.pid --maxtasksperchild=2
apache 1684 1042 0 08:56 ? 00:00:04 (wsgi:pulp) -DFOREGROUND
apache 1685 1042 0 08:56 ? 00:00:04 (wsgi:pulp) -DFOREGROUND
apache 1686 1042 0 08:56 ? 00:00:04 (wsgi:pulp) -DFOREGROUND
apache 1687 1042 0 08:56 ? 00:00:00 (wsgi:pulp-cont -DFOREGROUND
apache 1688 1042 0 08:56 ? 00:00:00 (wsgi:pulp-cont -DFOREGROUND
apache 1689 1042 0 08:56 ? 00:00:00 (wsgi:pulp-cont -DFOREGROUND
apache 1690 1042 0 08:56 ? 00:00:01 (wsgi:pulp_forg -DFOREGROUND
apache 2002 1085 0 08:57 ? 00:00:00 /usr/bin/python /usr/bin/celery worker -A pulp.server.async.app -n resource_manager@%h -Q resource_manager -c 1 --events --umask 18 --pidfile=/var/run/pulp/resource_manager.pid
apache 17757 1259 0 09:27 ? 00:00:00 /usr/bin/python /usr/bin/celery worker -n reserved_resource_worker-0@%h -A pulp.server.async.app -c 1 --events --umask 18 --pidfile=/var/run/pulp/reserved_resource_worker-0.pid --maxtasksperchild=2
root 18147 2303 0 09:29 pts/0 00:00:00 grep --color=auto pulp

This one is responsible “for managing repositories of software packages and making it available to a large numbers of consumers”. So much for the main components. We will take a more in-depth look at them in one of the next posts.
