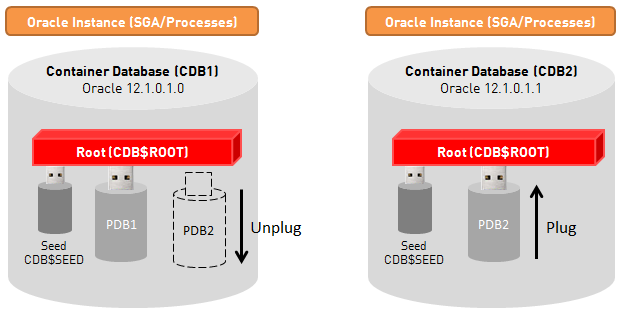

The concept of Multitenant databases, which was introduced with Oracle 12c in June 2013, allows to run several databases on a single instance. Oracle presents this feature as a good solution for Oracle patching. The reason behind it is that it is now possible to unplug a container database (called PDB) from its original container (called CDB), in order to plug it into a new local or remote Container with a higher level of PSU. In this post, I will show how you can install the new PSU 12.1.0.1.1 for Oracle 12c (released in October 2013) using Multitenant databases to keep the downtime as low as possible.

The following schema shows the concept:

We assume that a company is using a Container database CDB1 with two pluggable databases PDB1 and PDB2 running on it:

SQL> select name, open_mode from v$pdbs; NAME OPEN_MODE ------------------------------ ---------- PDB$SEED READ ONLY PDB1 READ WRITE PDB2 READ WRITE

These two databases are accessed by users and applications and a window maintenance is planned in order to install the latest PSU for Oracle 12c.

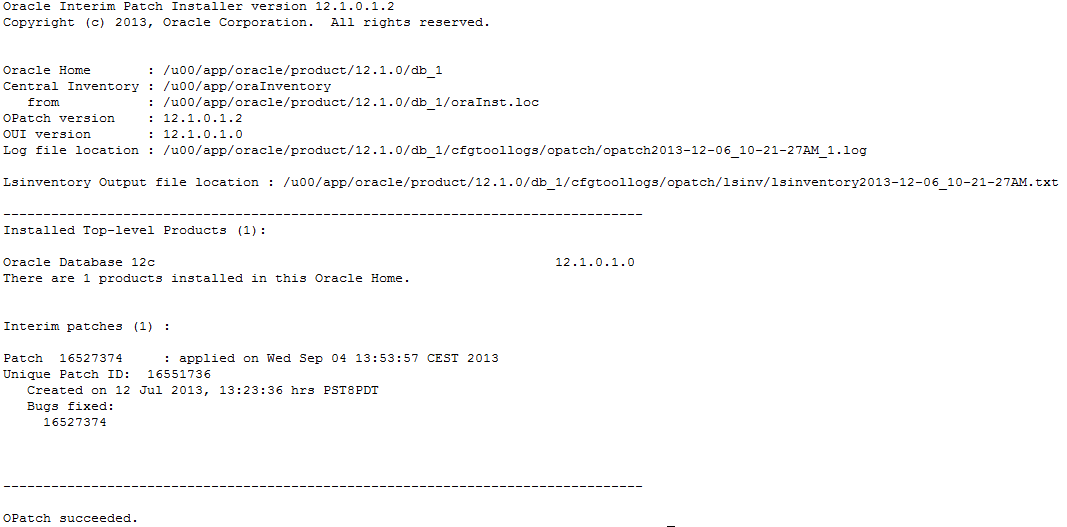

Using the OPatch utility, we can see the currently installed patches:

$ opatch lsinventory

We can see that the patch 16527374 is already installed. It has been installed in order to fix a bug with Enterprise Manager Express 12c and Multitenant databases. Also note the presence of the latest OPatch utility 12.1.0.1.2.

To unplug PDB2 from the container CDB1 and plug it into a new CDB with a higher PSU level, we need to install a second rdbms software with the same patching level (12.1.0.1.0 + EM Express bug fix), on which the new PSU will be installed.

An empty container database CDB2 has been created on the second environment, with the Oracle Home /u00/app/oracle/product/12.1.0/db_2. Now it is time to install the new PSU 12.1.0.1.1 on the second environment. The listener for this installation is named LISTENER_DB_2:

Step 1: Upgrade OPatch utility to the latest version (see note 6880880) if not already done.

Step 2: Download and unzip the PSU 12.1.0.1.1 on the server (patch 17027533).

$ unzip p17027533_121010_Linux-x86-64.zip -d /tmp

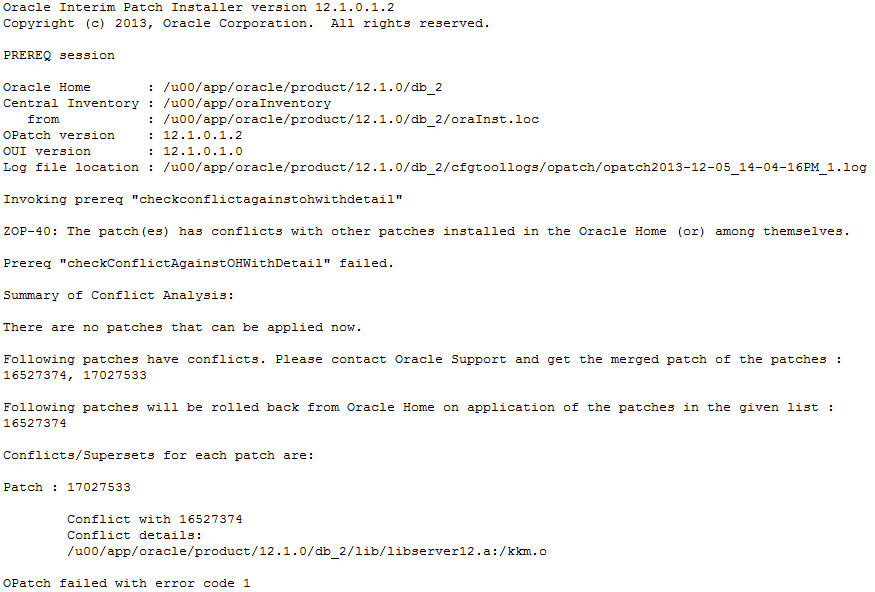

Step 3: Check conflicts between the PSU and already installed patches.

$ opatch prereq CheckConflictAgainstOHWithDetail -ph ./

The patch already installed to fix the EM Express bug is in conflict with the new PSU. Oracle will remove the existing patch before installing the new PSU. This is not an issue here, since Oracle provides the patch 16527374 specific to Oracle 12.1.0.1.1. It will be possible to reinstall this patch once the PSU is applied.

Step 4: Shut down all databases and listeners running on the Oracle Home of the second environment.

Shut down CDB2:

SQL> shutdown immediate;

I did not create any listener for my second environment. If you plan to drop the current ORACLE_HOME after upgrade, you will have to create a new listener in the new ORACLE_HOME and to shut it down before upgrading.

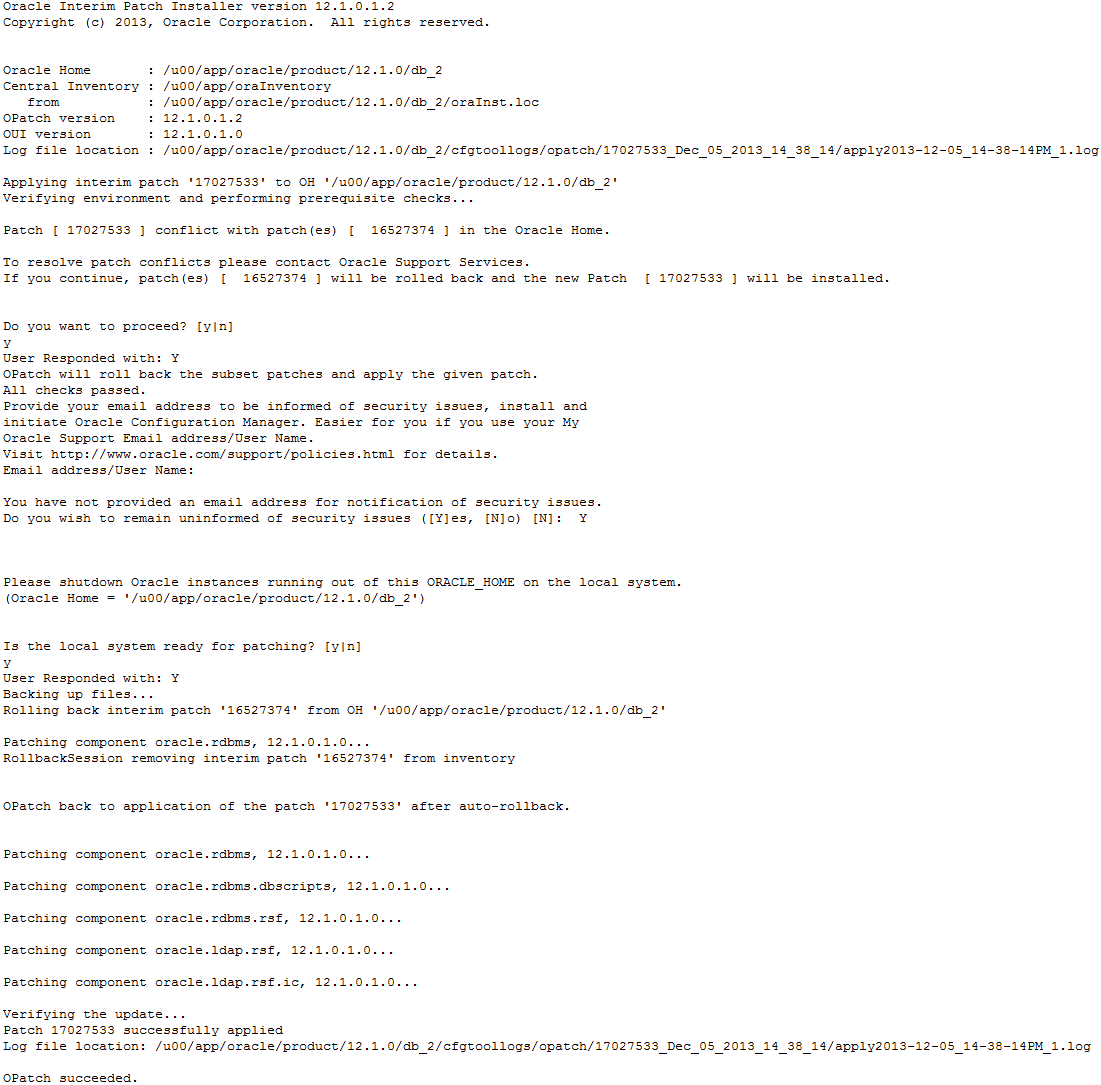

Step 5: Install the new PSU.

$ cd /tmp/17027533

$ opatch apply

Note that the patch for EM Express bug fix has to be reinstalled for 12.1.0.1.1 release, since it has been removed during the PSU install. This bug is not covered by the PSU.

Step 6: Restart databases and listeners.

Restart the CDB2 database:

SQL> startup;

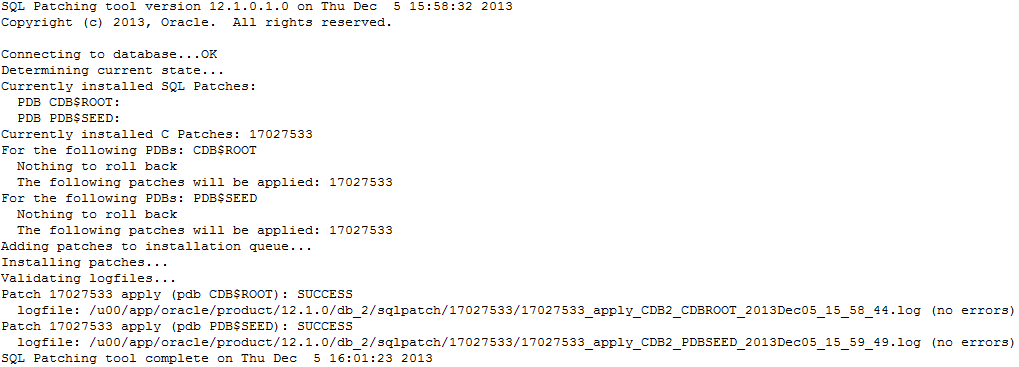

Step 7: Load modified SQL files into the database with Datapatch tool.

$ cd $ORACLE_HOME/OPatch$ ./datapatch -verbose

It is possible that the following error occurs:

DBD::Oracle::st execute failed: ORA-20001: Latest xml inventory is not loaded into table

In this case, the parameter _disable_directory_link_check must be set to TRUE (see Oracle note 1602089.1) and the database must be restarted:

alter system set “_disable_directory_link_check”=TRUE scope=spfile;

We have now CDB1 running in 12.1.0.1.0 PSU level, and CDB2 running in 12.1.0.1.1 PSU level. Until now, no downtime occured on PDB1 and PDB2 databases. All configuration and installation steps for the PSU have been performed on a non-productive environment.

The next steps consist in unplugging PDB2 from CDB1, in order to plug it into CDB2.

Step 8: Stop the user application and shutdown PDB2 from CDB1.

From this step on, the pluggable database must be closed. The downtime will start now.

SQL> connect sys/manager@CDB1 as sysdba Connected. SQL> ALTER SESSION SET CONTAINER = PDB2; Session altered. SQL> ALTER PLUGGABLE DATABASE CLOSE; Pluggable database altered.

Step 9: Unplug PDB2 from CDB1.

SQL> ALTER SESSION SET CONTAINER = CDB$ROOT; Session altered. SQL> ALTER PLUGGABLE DATABASE PDB2 UNPLUG INTO '/u01/oradata/CDB1/PDB2/PDB2.xml'; Pluggable database altered.

Step 10: Plug PDB2 into CDB2.

SQL> connect sys/manager@CDB2 as sysdba

Connected.

SQL> CREATE PLUGGABLE DATABASE PDB2

USING '/u01/oradata/CDB1/PDB2/PDB2.xml'

MOVE FILE_NAME_CONVERT = ('/u01/oradata/CDB1/PDB2','/u01/oradata/CDB2/PDB2');

Pluggable database created.

The use of the MOVE clause makes the new pluggable database creation very quick, since the database files are not copied but only moved on the file system. This operation is immediate if using the same file system.

SQL> ALTER SESSION SET CONTAINER = PDB2; Session altered. SQL> ALTER PLUGGABLE DATABASE OPEN; Pluggable database altered.

The database PDB2 is now opened, and users can access to the database. Note that if installing CDB2 on a different host, users may have to update the TNS connect string.

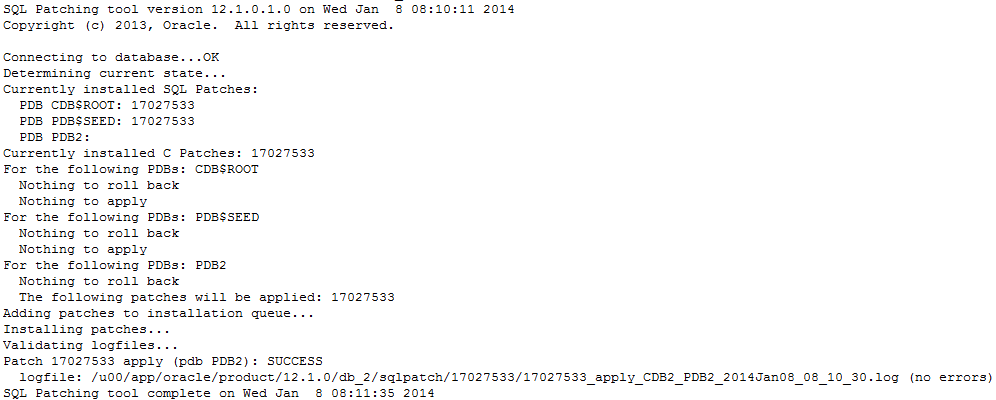

Step 11: Load modified SQL files into the database with Datapatch tool.

Since the rdbms has been upgraded to 12.1.0.1.1 before any pluggable database has been plugged into CDB2, all newly plugged databases must execute the “datapatch” script in order to load the modified SQL files.

Run the following command once the CDB2 environment is set:

$ cd $ORACLE_HOME/OPatch $ ./datapatch -verbose

Important: If you are using static listener registration, do not forget to change your listener.ora in order to provide the true ORACLE_HOME path corresponding to the new environment.

The pluggable database is now fully ready and downtime only occured between steps 8 and 10. By using the MOVE clause, the only downtime corresponds to the time required for shutting down the database from the source CDB and starting the database on the destination CDB. It represents a few seconds… And if both CDB are running on the same host, the users will not have to update their TNS connect string in order to access the database.

Patching PDBs using this method might ease the DBA life in the future 🙂

![Thumbnail [60x60]](https://www.dbi-services.com/blog/wp-content/uploads/2022/12/oracle-square.png)

![Thumbnail [90x90]](https://www.dbi-services.com/blog/wp-content/uploads/2022/08/DWE_web-min-scaled.jpg)