This is the third part of the 26ai migration blog. Here we upgrade a 2-node Clusterware with ASM from 19c, using the newest 23.26.1 Gold Images from Oracle Support. This tutorial shows the main steps of the upgrade; it is not a procedure for upgrading your production environment. Please check the official Oracle documentation and do proper planning and prechecks before you upgrade.

This is the third of 3 articles:

- Database upgrade to AI Database 26ai (23.26.1) for Linux x86-64 upgrade from 19C in 3 steps using gold images.

- Oracle GridInfrastructure 26ai/ ASM upgrade from 19c one node.

- Grid Clusterware 26ai 2 node RAC upgrade from 19c rolling forward

Step 1. Downloading software and preparation

Download the base Gold Image of the latest Oracle AI Database 26ai Grid Infrastructure (23.26.1) for Linux x86-64 from Oracle (we are upgrading on Oracle Linux 9):

https://www.oracle.com/database/technologies/oracle26ai-linux-downloads.html

or

If you have access to the new support website, use this document to always get the newest updated version (recommended); a new update is released every 3 months.

Oracle AI Database 26ai Proactive Patch Information KB153394

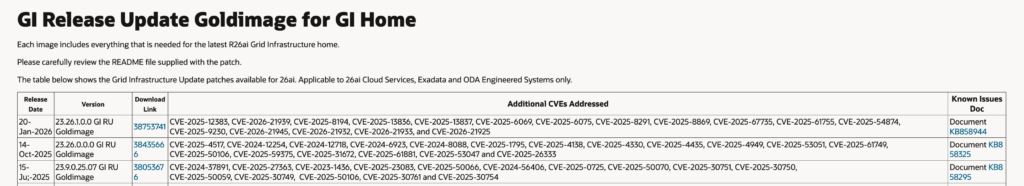

Download the latest Gold Image release update. At the time of writing, the newest one was January 2026 (patch 38753741).

While it downloads, you can read the documentation 🙂

https://updates.oracle.com/Orion/Services/download?type=readme&aru=28449739

- In this tutorial we will not use Oracle Fleet Patching and Provisioning (Oracle FPP)

- We will use the traditional gridSetup.sh in silent mode and the root scripts to switch HAS/CRS to the new home.

Step 2. Update your Linux and install the Oracle preinstall RPM

yum update

yum install oracle-ai-database-preinstall-26ai

reboot

In my case Linux had not been updated for a while, so I had more than 100 RPMs to update, including the kernel, and I rebooted my Linux box afterwards.

Install 7 Packages

Upgrade 102 Packages

Remove 7 Packages

Total download size: 1.3 G

The oracle-ai-database-preinstall-26ai RPM installs all dependencies needed for Oracle 26ai. If you installed earlier versions there is not much left to do, but it is better to have it installed, as future updates may add dependencies. If you are not using Oracle Linux, please check the documentation for exactly which RPMs are needed.

- Unpack the downloaded Grid Infrastructure gold image software.

mkdir -p /u03/app/grid26/

unzip /u02/inst/26ai/p38753741_230000_Linux-x86-64.zip -d /u03/app/grid26/

cd /u03/app/grid26/

Step 3. Setup new home and prechecks

[oracle@racnode1 grid26]$ ./gridSetup.sh -silent -setupHome -OSDBA oinstall -OSASM dba -ORACLE_BASE /u01/app/oracle -executePrereqs

Launching Oracle Grid Infrastructure Setup Wizard...

*********************************************

Swap Size: This is a prerequisite condition to test whether sufficient total swap space is available on the system.

Severity: IGNORABLE

Overall status: VERIFICATION_FAILED

Error message: PRVF-7573 : Sufficient swap size is not available on node "racnode1" [Required = 8.4861GB (8898344.0KB) ; Found = 2GB (2097148.0KB)]

Cause: The swap size found does not meet the minimum requirement.

Action: Increase swap size to at least meet the minimum swap space requirement.

-----------------------------------------------

[WARNING] [INS-13014] Target environment does not meet some optional requirements.

CAUSE: Some of the optional prerequisites are not met. See logs for details. /u01/app/oraInventory/logs/GridSetupActions2026-02-20_06-12-50PM/gridSetupActions2026-02-20_06-12-50PM.log.

ACTION: Identify the list of failed prerequisite checks from the log: /u01/app/oraInventory/logs/GridSetupActions2026-02-20_06-12-50PM/gridSetupActions2026-02-20_06-12-50PM.log. Then either from the log file or from installation manual find the appropriate configuration to meet the prerequisites and fix it manually.

The prechecks for -setupHome show only an issue with swap space, which we ignore on our test machine.
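The swap finding can also be checked by hand before running the installer. This is a minimal sketch, assuming the required value from the PRVF-7573 message above (8898344 KB) also applies to your node; the function names swap_ok and current_swap_kb are made up for illustration and are not part of any Oracle tool.

```shell
#!/bin/sh
# Hypothetical helper: succeeds when the found swap (KB) is at least the required swap (KB).
swap_ok() {
    required_kb=$1
    found_kb=$2
    [ "$found_kb" -ge "$required_kb" ]
}

# Hypothetical helper: read SwapTotal (in KB) from /proc/meminfo on Linux.
current_swap_kb() {
    awk '/^SwapTotal:/ {print $2}' /proc/meminfo 2>/dev/null
}

# Example with the numbers from the precheck output above:
# required 8898344 KB, found 2097148 KB.
if swap_ok 8898344 2097148; then
    echo "swap OK"
else
    echo "swap too small"
fi
```

On a real node you could call swap_ok 8898344 "$(current_swap_kb)" instead of passing the found value by hand.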

We will run all other prechecks before the Grid upgrade, using cluvfyrac.sh from the new grid home, so first we only install the grid software on both nodes.

In this process we do a rolling upgrade node by node, so your database always stays online on at least one node.

Make sure your database/PDB services and failover options are properly configured and that your applications are using them.

Now let's set up the new grid home:

./gridSetup.sh -silent -setupHome -OSDBA oinstall -OSASM dba -ORACLE_BASE /u01/app/oracle

[oracle@racnode1 grid26]$ ./gridSetup.sh -silent -setupHome -OSDBA oinstall -OSASM dba -ORACLE_BASE /u01/app/oracle

Launching Oracle Grid Infrastructure Setup Wizard...

*********************************************

Swap Size: This is a prerequisite condition to test whether sufficient total swap space is available on the system.

Severity: IGNORABLE

Overall status: VERIFICATION_FAILED

Error message: PRVF-7573 : Sufficient swap size is not available on node "racnode1" [Required = 8.4861GB (8898344.0KB) ; Found = 2GB (2097148.0KB)]

Cause: The swap size found does not meet the minimum requirement.

Action: Increase swap size to at least meet the minimum swap space requirement.

-----------------------------------------------

The response file for this session can be found at:

/u03/app/grid26/install/response/grid_2026-02-20_06-14-20PM.rsp

You can find the log of this install session at:

/u01/app/oraInventory/logs/GridSetupActions2026-02-20_06-14-20PM/gridSetupActions2026-02-20_06-14-20PM.log

As a root user, run the following script(s):

1. /u03/app/grid26/root.sh

Run /u03/app/grid26/root.sh on the following nodes:

[racnode1]

Successfully Setup Software.

Now, as the root user, execute /u03/app/grid26/root.sh:

[root@racnode1 ~]# /u03/app/grid26/root.sh

Check /u03/app/grid26/install/root_racnode1_2026-02-20_18-23-51-043070049.log for the output of root script

[root@racnode1 ~]# cat /u03/app/grid26/install/root_racnode1_2026-02-20_18-23-51-043070049.log

Performing root user operation.

The following environment variables are set as:

ORACLE_OWNER= oracle

ORACLE_HOME= /u03/app/grid26

Copying dbhome to /usr/local/bin ...

Copying oraenv to /usr/local/bin ...

Copying coraenv to /usr/local/bin ...

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

To configure Grid Infrastructure for a Cluster or Grid Infrastructure for a Stand-Alone Server execute the following command as oracle user:

/u03/app/grid26/gridSetup.sh

This command launches the Grid Infrastructure Setup Wizard. The wizard also supports silent operation, and the parameters can be passed through the response file that is available in the installation media.

Do the same steps on RAC node 2.

Now that the Grid 26ai software is set up on both nodes, we can start the prechecks before we perform the upgrade. We will run 2 separate prechecks:

/u03/app/grid26/bin/cluvfyrac.sh stage -pre crsinst -n racnode1

and

/u03/app/grid26/gridSetup.sh -silent -upgrade -executePrereqs

The outputs of these prechecks are quite long lists. You should see most checks marked as 'PASSED'. If some are 'FAILED', look into them and try to fix them (not every FAIL means that the upgrade is impossible). In my case I ignore the swap space size, as this is a test VM where swap can be small.
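Because the precheck output is long, it can help to filter it down to the failed checks only. The following is a rough sketch, assuming the output keeps the "name ...FAILED (codes)" layout shown in this article; failed_checks is a hypothetical helper name, not a cluvfy option.

```shell
#!/bin/sh
# Hypothetical helper: print only the checks reported as FAILED.
# Reads cluvfy-style output ("<check name> ...FAILED (<codes>)") on stdin.
failed_checks() {
    grep -F '...FAILED' | sed 's/ *\.\.\.FAILED/: FAILED/'
}

# Sample lines in the format shown in this article.
sample_output='Physical Memory ...PASSED
Available Physical Memory ...PASSED
Swap Size ...FAILED (PRVF-7573)
User Existence: oracle ...PASSED'

printf '%s\n' "$sample_output" | failed_checks
```

In practice the real output would be piped in, for example /u03/app/grid26/bin/cluvfyrac.sh stage -pre crsinst -n racnode1 | failed_checks. Each failed check still needs to be read in full in the original output to see its cause and action.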

[oracle@racnode1 grid26]$ /u03/app/grid26/bin/cluvfyrac.sh stage -pre crsinst -n racnode1

This software is "401" days old. It is a best practice to update the CRS home by downloading and applying the latest release update. Refer to MOS note 756671.1 for more details.

Performing following verification checks ...

Physical Memory ...PASSED

Available Physical Memory ...PASSED

Swap Size ...FAILED (PRVF-7573)

Free Space: racnode1:/usr,racnode1:/var,racnode1:/etc,racnode1:/sbin,racnode1:/tmp ...PASSED

Free Space: racnode1:/u01/grid ...PASSED

User Existence: oracle ...

Users With Same UID: 54321 ...PASSED

User Existence: oracle ...PASSED

...

...

In my case there were 2 main failures that make the whole upgrade impossible unless they are fixed beforehand: the disk groups' RDBMS compatibility parameter and the ASM disks' group ownership:

*********************************************

Disk group RDBMS compatibility setting: Check for disk group RDBMS compatibility setting

Severity: FATAL

Overall status: VERIFICATION_FAILED

Error message: PRVE-3180 : RDBMS compatibility for ASM disk group "DATA" is set to "10.1.0.0.0", which is less than the minimum supported value "19.0.0.0.0".

Cause: A query showed that the ASM disk group attribute "compatible.rdbms"

for the indicated disk group was set to a value less than the

minimum supported value.

Action: Ensure that the RDBMS compatibility of the indicated disk group is

set to a value greater than or equal to the indicated minimum

supported value by running the command ''asmcmd setattr -G

<diskgroup> compatible.rdbms <value>''.

-----------------------------------------------

Error message: PRVE-3180 : RDBMS compatibility for ASM disk group "DATA" is set to "10.1.0.0.0", which is less than the minimum supported value "19.0.0.0.0".

Cause: A query showed that the ASM disk group attribute "compatible.rdbms"

for the indicated disk group was set to a value less than the

minimum supported value.

Action: Ensure that the RDBMS compatibility of the indicated disk group is

set to a value greater than or equal to the indicated minimum

supported value by running the command ''asmcmd setattr -G

<diskgroup> compatible.rdbms <value>''.

*********************************************

Device Checks for ASM: This is a prerequisite check to verify that the specified devices meet the requirements for ASM.

Severity: FATAL

Overall status: VERIFICATION_FAILED

-----------------------------------------------

-----------------------------------------------

Error message: PRVF-9992 : Group of device "/dev/asm-disk4" did not match the expected group. [Expected = "oinstall"; Found = "dba"] on nodes: [racnode2, racnode1]

Cause: Group of the device listed was different than required group.

Action: Change the group of the device listed or specify a different device.

Error message: PRVF-9992 : Group of device "/dev/asm-disk5" did not match the expected group. [Expected = "oinstall"; Found = "dba"] on nodes: [racnode2, racnode1]

Cause: Group of the device listed was different than required group.

Action: Change the group of the device listed or specify a different device.

Error message: PRVF-9992 : Group of device "/dev/asm-disk1" did not match the expected group. [Expected = "oinstall"; Found = "dba"] on nodes: [racnode2, racnode1]

Cause: Group of the device listed was different than required group.

Action: Change the group of the device listed or specify a different device.

Error message: PRVF-9992 : Group of device "/dev/asm-disk2" did not match the expected group. [Expected = "oinstall"; Found = "dba"] on nodes: [racnode2, racnode1]

Cause: Group of the device listed was different than required group.

Action: Change the group of the device listed or specify a different device.

*********************************************

Access Control List check: This check verifies that the ownership and permissions are correct and consistent for the devices across nodes.

Severity: CRITICAL

Overall status: VERIFICATION_FAILED

-----------------------------------------------

-----------------------------------------------

Quick fix:

1. Set the disk group RDBMS compatibility parameter to at least 19:

[oracle@racnode1 ~]$ asmcmd setattr -G DATA compatible.rdbms 19.0.0.0.0
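To decide which disk groups need this change, the current attribute value has to be compared with the minimum from PRVE-3180. This is a small sketch of such a version comparison, assuming GNU sort with the -V option is available (it is on Oracle Linux); version_lt is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: succeeds when version $1 is strictly lower than version $2.
# Relies on GNU sort -V (version sort).
version_lt() {
    [ "$1" != "$2" ] &&
        [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$1" ]
}

minimum="19.0.0.0.0"   # minimum value reported by PRVE-3180 in the output above
for current in 10.1.0.0.0 19.0.0.0.0; do
    if version_lt "$current" "$minimum"; then
        echo "compatible.rdbms $current is below $minimum"
    else
        echo "compatible.rdbms $current is fine"
    fi
done
```

Keep in mind that disk group compatibility attributes can only be raised, not lowered again, so make sure no database older than the new value still needs to use the disk group before you change it.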

2. Change the group of the ASM disks to oinstall

My ASM disks are udev devices, so I can change the group ownership in /etc/udev/rules.d/99-oracle-asmdevices.rules:

vi /etc/udev/rules.d/99-oracle-asmdevices.rules

KERNEL=="vdc1", SYMLINK+="asm-disk1", OWNER="oracle", GROUP="oinstall", MODE="0660"

KERNEL=="vdd1", SYMLINK+="asm-disk2", OWNER="oracle", GROUP="oinstall", MODE="0660"

...

Then we reboot the server (please stop the database instance on the node you are working on with srvctl before the reboot).
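Once the node is back, the device group can be verified before the prechecks are run again. This is a minimal sketch, assuming GNU stat and the /dev/asm-disk* symlink names from the PRVF-9992 messages above; check_group is a hypothetical helper, not an Oracle utility.

```shell
#!/bin/sh
# Hypothetical helper: report paths whose group differs from the expected one.
# Usage: check_group <expected_group> <path>...
# Returns non-zero if any path has a different group.
check_group() {
    expected=$1
    shift
    status=0
    for path in "$@"; do
        # -L follows symlinks such as /dev/asm-disk1 to the real device
        found=$(stat -L -c '%G' "$path" 2>/dev/null)
        if [ "$found" != "$expected" ]; then
            echo "$path: expected group $expected, found ${found:-unknown}"
            status=1
        fi
    done
    return "$status"
}

# Only run against the ASM symlinks when they exist on this machine.
if ls /dev/asm-disk* >/dev/null 2>&1; then
    check_group oinstall /dev/asm-disk* || echo "check the udev rules"
fi
```

The check should be run on every node, since PRVF-9992 was reported for both racnode1 and racnode2.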

After the server starts up, start the instance and repeat step 2 of the quick fix on RAC node 2 as well. Now we are good to go with the main upgrade.

Step 4. Upgrade

Execute on node 1:

[oracle@racnode1 grid26]$ /u03/app/grid26/gridSetup.sh -silent -ignorePrereqFailure -upgrade

Launching Oracle Grid Infrastructure Setup Wizard...

[WARNING] [INS-13013] Target environment does not meet some mandatory requirements.

CAUSE: Some of the mandatory prerequisites are not met. See logs for details. /u01/app/oraInventory/logs/GridSetupActions2026-02-24_04-23-48PM/gridSetupActions2026-02-24_04-23-48PM.log.

ACTION: Identify the list of failed prerequisite checks from the log: /u01/app/oraInventory/logs/GridSetupActions2026-02-24_04-23-48PM/gridSetupActions2026-02-24_04-23-48PM.log. Then either from the log file or from installation manual find the appropriate configuration to meet the prerequisites and fix it manually.

The response file for this session can be found at:

/u03/app/grid26/install/response/grid_2026-02-24_04-23-48PM.rsp

As a root user, run the following script(s):

1. /u03/app/grid26/rootupgrade.sh

Run /u03/app/grid26/rootupgrade.sh on the following nodes:

[racnode1, racnode2]

Run the script on the node racnode1 first. After successful completion, you can run the script in parallel on all the other nodes, except a node you designate as the last node. When all the nodes except the last node are done successfully, run the script on the last node.

Successfully Setup Software with warning(s).

Run the 'gridSetup.sh -executeConfigTools' command to complete the configuration.

I use the -ignorePrereqFailure parameter because I want Oracle to ignore the swap space issue and some permission checks.

Now we execute as root: /u03/app/grid26/rootupgrade.sh

This is the main upgrade process: it switches the whole stack of Grid processes to the new home and also starts ASM in the 26ai version.

[root@racnode1 ~]# /u03/app/grid26/rootupgrade.sh

Check /u03/app/grid26/install/root_racnode1_2026-02-24_16-32-24-369106275.log for the output of root script

[root@racnode1 ~]# cat /u03/app/grid26/install/root_racnode1_2026-02-24_16-32-24-369106275.log

Performing root user operation.

The following environment variables are set as:

ORACLE_OWNER= oracle

ORACLE_HOME= /u03/app/grid26

Copying dbhome to /usr/local/bin ...

Copying oraenv to /usr/local/bin ...

Copying coraenv to /usr/local/bin ...

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

RAC option is not linked in

Relinking oracle with rac_on option

Executing command '/u03/app/grid26/perl/bin/perl -I/u03/app/grid26/perl/lib -I/u03/app/grid26/crs/install /u03/app/grid26/crs/install/rootcrs.pl -upgrade'

Using configuration parameter file: /u03/app/grid26/crs/install/crsconfig_params

The log of current session can be found at:

/u01/app/oracle/crsdata/racnode1/crsconfig/crsupgrade_racnode1_2026-02-24_04-32-46PM.log

2026/02/24 16:32:55 CLSRSC-595: Executing upgrade step 1 of 16: 'UpgradeTFA'.

2026/02/24 16:32:55 CLSRSC-4015: Performing install or upgrade action for Oracle Autonomous Health Framework (AHF).

2026/02/24 16:32:55 CLSRSC-4012: Shutting down Oracle Autonomous Health Framework (AHF).

2026/02/24 16:33:09 CLSRSC-4013: Successfully shut down Oracle Autonomous Health Framework (AHF).

2026/02/24 16:33:09 CLSRSC-595: Executing upgrade step 2 of 16: 'ValidateEnv'.

2026/02/24 16:33:09 CLSRSC-363: User ignored prerequisites during installation

2026/02/24 16:33:10 CLSRSC-595: Executing upgrade step 3 of 16: 'GetOldConfig'.

2026/02/24 16:33:10 CLSRSC-692: Checking whether CRS entities are ready for upgrade. This operation may take a few minutes.

2026/02/24 16:34:23 CLSRSC-4003: Successfully patched Oracle Autonomous Health Framework (AHF).

2026/02/24 16:34:50 CLSRSC-693: CRS entities validation completed successfully.

2026/02/24 16:34:50 CLSRSC-464: Starting retrieval of the cluster configuration data

NAME=ora.qosmserver

TYPE=ora.qosmserver.type

TARGET=ONLINE

STATE=ONLINE on racnode2

CRS-4672: Successfully backed up the Voting File for Cluster Synchronization Service.

2026/02/24 16:34:59 CLSRSC-515: Starting OCR manual backup.

2026/02/24 16:35:03 CLSRSC-516: OCR manual backup successful.

2026/02/24 16:35:07 CLSRSC-465: Retrieval of the cluster configuration data has successfully completed.

2026/02/24 16:35:07 CLSRSC-595: Executing upgrade step 4 of 16: 'UpgPrechecks'.

2026/02/24 16:35:14 CLSRSC-595: Executing upgrade step 5 of 16: 'SetupOSD'.

2026/02/24 16:35:14 CLSRSC-595: Executing upgrade step 6 of 16: 'PreUpgrade'.

2026/02/24 16:35:17 CLSRSC-486:

At this stage of upgrade, the OCR has changed.

Any attempt to downgrade the cluster after this point will require a complete cluster outage to restore the OCR.

2026/02/24 16:35:17 CLSRSC-541:

To downgrade the cluster:

1. All nodes that have been upgraded must be downgraded.

2026/02/24 16:35:17 CLSRSC-542:

2. Before downgrading the last node, the Grid Infrastructure stack on all other cluster nodes must be down.

2026/02/24 16:35:18 CLSRSC-468: Setting Oracle Clusterware and ASM to rolling migration mode

2026/02/24 16:35:18 CLSRSC-482: Running command: '/u01/grid/bin/crsctl start rollingupgrade 23.0.0.0.0'

CRS-1131: The cluster was successfully set to rolling upgrade mode.

2026/02/24 16:35:22 CLSRSC-482: Running command: '/u03/app/grid26/bin/asmca -silent -upgradeNodeASM -nonRolling false -oldCRSHome /u01/grid -oldCRSVersion 19.0.0.0.0 -firstNode true -startRolling false '

2026/02/24 16:35:24 CLSRSC-469: Successfully set Oracle Clusterware and ASM to rolling migration mode

2026/02/24 16:35:25 CLSRSC-466: Starting shutdown of the current Oracle Grid Infrastructure stack

2026/02/24 16:36:16 CLSRSC-467: Shutdown of the current Oracle Grid Infrastructure stack has successfully completed.

2026/02/24 16:36:18 CLSRSC-595: Executing upgrade step 7 of 16: 'CheckCRSConfig'.

2026/02/24 16:36:18 CLSRSC-595: Executing upgrade step 8 of 16: 'UpgradeOLR'.

2026/02/24 16:36:22 CLSRSC-595: Executing upgrade step 9 of 16: 'ConfigCHMOS'.

2026/02/24 16:36:22 CLSRSC-595: Executing upgrade step 10 of 16: 'createOHASD'.

2026/02/24 16:36:22 CLSRSC-595: Executing upgrade step 11 of 16: 'ConfigOHASD'.

2026/02/24 16:36:22 CLSRSC-329: Replacing Clusterware entries in file 'oracle-ohasd.service'

2026/02/24 16:36:31 CLSRSC-595: Executing upgrade step 12 of 16: 'InstallACFS'.

2026/02/24 16:36:48 CLSRSC-595: Executing upgrade step 13 of 16: 'RemoveKA'.

2026/02/24 16:36:48 CLSRSC-595: Executing upgrade step 14 of 16: 'UpgradeCluster'.

2026/02/24 16:37:31 CLSRSC-343: Successfully started Oracle Clusterware stack

clscfg: EXISTING configuration version 19 detected.

Successfully taken the backup of node specific configuration in OCR.

Successfully accumulated necessary OCR keys.

Creating OCR keys for user 'root', privgrp 'root'..

Operation successful.

2026/02/24 16:37:41 CLSRSC-595: Executing upgrade step 15 of 16: 'UpgradeNode'.

2026/02/24 16:37:42 CLSRSC-474: Initiating upgrade of resource types

2026/02/24 16:37:47 CLSRSC-475: Upgrade of resource types successfully initiated.

2026/02/24 16:37:48 CLSRSC-595: Executing upgrade step 16 of 16: 'PostUpgrade'.

2026/02/24 16:37:54 CLSRSC-325: Configure Oracle Grid Infrastructure for a Cluster ... succeeded
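While the root script runs, its progress can be followed through the CLSRSC-595 step lines. Here is a short sketch that prints the most recent step from such a log, assuming the line format shown above; last_upgrade_step is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: print the last "N of M: name" upgrade step found in a
# root script log read from stdin. Prints nothing if no step line is present.
last_upgrade_step() {
    awk '/CLSRSC-595: Executing upgrade step/ {
             step = $0
             sub(/.*upgrade step /, "", step)
         }
         END { if (step != "") print step }'
}

# Sample lines copied from the log above.
sample_log="2026/02/24 16:37:41 CLSRSC-595: Executing upgrade step 15 of 16: 'UpgradeNode'.
2026/02/24 16:37:42 CLSRSC-474: Initiating upgrade of resource types
2026/02/24 16:37:48 CLSRSC-595: Executing upgrade step 16 of 16: 'PostUpgrade'."

printf '%s\n' "$sample_log" | last_upgrade_step
```

From a second terminal, the same function could be fed the root script log named in the "Check ... for the output of root script" message, for example last_upgrade_step < /u03/app/grid26/install/root_racnode1_2026-02-24_16-32-24-369106275.log.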

We can check that CRS is already running from the new home on node 1:

[root@racnode1 ~]# systemctl status oracle-ohasd.service

● oracle-ohasd.service - Oracle High Availability Services

Loaded: loaded (/etc/systemd/system/oracle-ohasd.service; enabled; preset: disabled)

Drop-In: /etc/systemd/system/oracle-ohasd.service.d

└─00_oracle-ohasd.conf

Active: active (running) since Tue 2026-02-24 16:36:24 CET; 8min ago

Main PID: 86773 (init.ohasd)

Tasks: 651 (limit: 52416)

Memory: 5.5G (peak: 6.4G)

CPU: 2min 53.156s

CGroup: /oracle.slice/oracle-ohasd.service

├─ 86773 /bin/sh /etc/oracle/scls_scr/racnode1/root/init.ohasd run ">/dev/null" "2>&1" "</dev/null"

├─ 91877 /u03/app/grid26/bin/ohasd.bin reboot _ORA_BLOCKING_STACK_LOCALE=AMERICAN_AMERICA.AL32UTF8

├─ 92028 /u03/app/grid26/bin/orarootagent.bin

├─ 92166 /u03/app/grid26/bin/oraagent.bin

├─ 92188 /u03/app/grid26/bin/mdnsd.bin

├─ 92190 /u03/app/grid26/bin/evmd.bin

├─ 92236 /u03/app/grid26/bin/gpnpd.bin

├─ 92288 /u03/app/grid26/bin/gipcd.bin

├─ 92308 /u03/app/grid26/bin/evmlogger.bin

├─ 92357 /u03/app/grid26/bin/cssdmonitor

├─ 92360 /u03/app/grid26/bin/osysmond.bin

├─ 92409 /u03/app/grid26/python/bin/python /u03/app/grid26/pylib/chmdiag.zip start -f "-n racnode1"

├─ 92415 /u03/app/grid26/bin/cssdagent

├─ 92450 /u03/app/grid26/bin/onmd.bin

├─ 92452 /u03/app/grid26/bin/ocssd.bin

├─ 92505 /u03/app/grid26/bin/crfelsnr -n racnode1

├─ 92569 /u03/app/grid26/bin/crsd.bin reboot

├─ 92725 /u03/app/grid26/bin/orarootagent.bin

Normally you do the whole upgrade in ONLINE mode, rolling forward node by node: keep your database instance running, check that your database is properly up and running on the upgraded node, then continue to the next one.

Now we can continue on the second node and execute exactly the same script:

[root@racnode2 ~]# /u03/app/grid26/rootupgrade.sh

Check /u03/app/grid26/install/root_racnode2_2026-02-24_16-47-22-723209560.log for the output of root script

[root@racnode2 ~]# cat /u03/app/grid26/install/root_racnode2_2026-02-24_16-47-22-723209560.log

Performing root user operation.

The following environment variables are set as:

ORACLE_OWNER= oracle

ORACLE_HOME= /u03/app/grid26

Copying dbhome to /usr/local/bin ...

Copying oraenv to /usr/local/bin ...

Copying coraenv to /usr/local/bin ...

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

RAC option is not linked in

Relinking oracle with rac_on option

Executing command '/u03/app/grid26/perl/bin/perl -I/u03/app/grid26/perl/lib -I/u03/app/grid26/crs/install /u03/app/grid26/crs/install/rootcrs.pl -upgrade'

Using configuration parameter file: /u03/app/grid26/crs/install/crsconfig_params

The log of current session can be found at:

/u01/app/oracle/crsdata/racnode2/crsconfig/crsupgrade_racnode2_2026-02-24_04-47-45PM.log

2026/02/24 16:47:51 CLSRSC-595: Executing upgrade step 1 of 16: 'UpgradeTFA'.

2026/02/24 16:47:51 CLSRSC-4015: Performing install or upgrade action for Oracle Autonomous Health Framework (AHF).

2026/02/24 16:47:51 CLSRSC-4012: Shutting down Oracle Autonomous Health Framework (AHF).

2026/02/24 16:48:05 CLSRSC-4013: Successfully shut down Oracle Autonomous Health Framework (AHF).

2026/02/24 16:48:05 CLSRSC-595: Executing upgrade step 2 of 16: 'ValidateEnv'.

2026/02/24 16:48:05 CLSRSC-363: User ignored prerequisites during installation

2026/02/24 16:48:06 CLSRSC-595: Executing upgrade step 3 of 16: 'GetOldConfig'.

2026/02/24 16:48:06 CLSRSC-464: Starting retrieval of the cluster configuration data

2026/02/24 16:48:10 CLSRSC-465: Retrieval of the cluster configuration data has successfully completed.

2026/02/24 16:48:10 CLSRSC-595: Executing upgrade step 4 of 16: 'UpgPrechecks'.

2026/02/24 16:48:11 CLSRSC-595: Executing upgrade step 5 of 16: 'SetupOSD'.

2026/02/24 16:48:11 CLSRSC-595: Executing upgrade step 6 of 16: 'PreUpgrade'.

2026/02/24 16:48:14 CLSRSC-466: Starting shutdown of the current Oracle Grid Infrastructure stack

2026/02/24 16:48:55 CLSRSC-467: Shutdown of the current Oracle Grid Infrastructure stack has successfully completed.

2026/02/24 16:48:55 CLSRSC-595: Executing upgrade step 7 of 16: 'CheckCRSConfig'.

2026/02/24 16:48:56 CLSRSC-595: Executing upgrade step 8 of 16: 'UpgradeOLR'.

2026/02/24 16:49:04 CLSRSC-595: Executing upgrade step 9 of 16: 'ConfigCHMOS'.

2026/02/24 16:49:04 CLSRSC-595: Executing upgrade step 10 of 16: 'createOHASD'.

2026/02/24 16:49:05 CLSRSC-595: Executing upgrade step 11 of 16: 'ConfigOHASD'.

2026/02/24 16:49:05 CLSRSC-329: Replacing Clusterware entries in file 'oracle-ohasd.service'

2026/02/24 16:49:18 CLSRSC-595: Executing upgrade step 12 of 16: 'InstallACFS'.

2026/02/24 16:49:46 CLSRSC-595: Executing upgrade step 13 of 16: 'RemoveKA'.

2026/02/24 16:49:46 CLSRSC-595: Executing upgrade step 14 of 16: 'UpgradeCluster'.

2026/02/24 16:49:59 CLSRSC-4003: Successfully patched Oracle Autonomous Health Framework (AHF).

2026/02/24 16:50:34 CLSRSC-343: Successfully started Oracle Clusterware stack

clscfg: EXISTING configuration version 23 detected.

Successfully taken the backup of node specific configuration in OCR.

Successfully accumulated necessary OCR keys.

Creating OCR keys for user 'root', privgrp 'root'..

Operation successful.

2026/02/24 16:50:42 CLSRSC-595: Executing upgrade step 15 of 16: 'UpgradeNode'.

Start upgrade invoked..

2026/02/24 16:50:45 CLSRSC-478: Setting Oracle Clusterware active version on the last node to be upgraded

2026/02/24 16:50:45 CLSRSC-482: Running command: '/u03/app/grid26/bin/crsctl set crs activeversion'

Started to upgrade the active version of Oracle Clusterware. This operation may take a few minutes.

Started to upgrade CSS.

Started to upgrade Oracle ASM.

Started to upgrade CRS.

CRS was successfully upgraded.

Started to upgrade Oracle ACFS.

Oracle ACFS was successfully upgraded.

Successfully upgraded the active version of Oracle Clusterware.

Oracle Clusterware active version was successfully set to 23.0.0.0.0.

2026/02/24 16:51:55 CLSRSC-479: Successfully set Oracle Clusterware active version

2026/02/24 16:51:58 CLSRSC-476: Finishing upgrade of resource types

2026/02/24 16:52:01 CLSRSC-477: Successfully completed upgrade of resource types

2026/02/24 16:52:19 CLSRSC-595: Executing upgrade step 16 of 16: 'PostUpgrade'.

Successfully updated XAG resources.

2026/02/24 16:52:28 CLSRSC-325: Configure Oracle Grid Infrastructure for a Cluster ... succeeded

DONE. Our 2-node Grid cluster has been upgraded on both nodes; at this stage all processes should be up and running on both nodes.
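A quick way to confirm the result is to look at the active version that crsctl reports. This is a sketch only: it assumes crsctl query crs activeversion prints the version in square brackets, as in the sample line below, and bracket_value is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: print the text between the first [ and ] of the first
# matching line read from stdin.
bracket_value() {
    sed -n 's/^[^[]*\[\([^]]*\)].*/\1/p' | head -n 1
}

# Assumed sample line; the exact wording on your system may differ.
sample='Oracle Clusterware active version on the cluster is [23.0.0.0.0]'

active=$(printf '%s\n' "$sample" | bracket_value)
echo "active version: $active"
```

On the cluster itself the input would come from /u03/app/grid26/bin/crsctl query crs activeversion | bracket_value, and the result should match the 23.0.0.0.0 that rootupgrade.sh reported on the last node.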

Step 5. Verifying our configuration

[oracle@racnode1 grid26]$ ./gridSetup.sh -executeConfigTools

ERROR: Unable to verify the graphical display setup. This application requires X display. Make sure that xdpyinfo exist under PATH variable.

Launching Oracle Grid Infrastructure Setup Wizard...

You can find the logs of this session at:

/u01/app/oraInventory/logs/GridSetupActions2026-02-24_05-10-20PM

[ Start ] CVU - 2026-02-24 17:10:35.22

Command: /bin/sh -c /u03/app/grid26/bin/cluvfy stage -post crsinst -collect cluster -gi_upgrade -n all

Initializing ...

Performing following verification checks ...

Node Connectivity ...

Hosts File ...PASSED

Check that maximum (MTU) size packet goes through subnet ...PASSED

subnet mask consistency for subnet "192.168.100.0" ...PASSED

subnet mask consistency for subnet "192.168.101.0" ...PASSED

Node Connectivity ...PASSED

Multicast or broadcast check ...

Checking subnet "192.168.101.0" for multicast communication with multicast

group "224.0.0.251"

Subnet Network Type Multicast Enabled

------------ ------------------------ ------------------------

192.168.101.0 PRIVATE TRUE

Multicast or broadcast check ...PASSED

Time zone consistency ...PASSED

Path existence, ownership, permissions and attributes ...

Path "/var" ...PASSED

Path "/var/lib/oracle" ...PASSED

Path "/u01/app/oraInventory/ContentsXML/inventory.xml" ...PASSED

Path "/dev/asm" ...PASSED

Path "/dev/shm" ...PASSED

Path "/etc/init.d/ohasd" ...PASSED

Path "/etc/init.d/init.ohasd" ...PASSED

Path "/etc/init.d/init.tfa" ...PASSED

Path "/etc/oracle/maps" ...PASSED

Path "/etc/oraInst.loc" ...PASSED

Path "/etc/tmpfiles.d/oracleGI.conf" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/incident" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/metadata" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/incpkg" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/alert" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/metadata_pv" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/stage" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/sweep" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/lck" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/log" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/cdump" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/trace" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/metadata_dgif" ...PASSED

Path "/u03/app/grid26/gpnp/wallets/peer/cwallet.sso" ...PASSED

Path "/u03/app/grid26/gpnp/wallets/root/cwallet.sso" ...PASSED

Path "/u03/app/grid26/gpnp/profiles/peer/profile.xml" ...PASSED

Path existence, ownership, permissions and attributes ...PASSED

Cluster Manager Integrity ...PASSED

User Mask ...PASSED

Cluster Integrity ...PASSED

OCR Integrity ...PASSED

CRS Integrity ...

Clusterware Version Consistency ...PASSED

CRS Integrity ...PASSED

Node Application Existence ...PASSED

Single Client Access Name (SCAN) ...

DNS/NIS name service 'rac-scan' ...

Name Service Switch Configuration File Integrity ...PASSED

DNS/NIS name service 'rac-scan' ...PASSED

Single Client Access Name (SCAN) ...PASSED

OLR Integrity ...PASSED

Voting Disk ...PASSED

ASM Integrity ...PASSED

ASM Network ...PASSED

ASM disk group free space ...PASSED

User Not In Group "root": oracle ...PASSED

Clock Synchronization ...

Network Time Protocol (NTP) ...

Daemon 'ntpd' ...FAILED (PRVG-1024, PRVF-7590)

Daemon 'chronyd' ...FAILED (PRVG-1024, PRVF-7590)

Network Time Protocol (NTP) ...FAILED (PRVG-1024, PRVF-7590)

Clock Synchronization ...FAILED (PRVG-1024, PRVF-7590)

VIP Subnet configuration check ...PASSED

Oracle Net Services configuration ...PASSED

Network configuration consistency checks ...PASSED

Package: psmisc-22.6-19 ...PASSED

File system mount options for path GI_HOME ...PASSED

File system mount option hidepid for proc filesystem ...PASSED

Cleanup of communication socket files ...PASSED

Domain Sockets ...PASSED

Post-check for cluster services setup was unsuccessful.

Checks did not pass for the following nodes:

racnode2,racnode1

Failures were encountered during execution of CVU verification request "stage -post crsinst".

Clock Synchronization ...FAILED

Network Time Protocol (NTP) ...FAILED

Daemon 'ntpd' ...FAILED

PRVG-1024 : The NTP daemon or Service was not running on any of the cluster

nodes.

racnode2: PRVF-7590 : "ntpd" is not running on node "racnode2"

racnode2: Liveness check failed for "ntpd"

racnode1: PRVF-7590 : "ntpd" is not running on node "racnode1"

racnode1: Liveness check failed for "ntpd"

Daemon 'chronyd' ...FAILED

PRVG-1024 : The NTP daemon or Service was not running on any of the cluster

nodes.

racnode2: PRVF-7590 : "chronyd" is not running on node "racnode2"

racnode2: Liveness check failed for "chronyd"

racnode1: PRVF-7590 : "chronyd" is not running on node "racnode1"

racnode1: Liveness check failed for "chronyd"

CVU operation performed: stage -post crsinst

Date: Feb 24, 2026, 5:10:35 PM

CVU version: 23.26.1.0.0 (010926x8664)

Clusterware version: 23.0.0.0.0

CVU home: /u03/app/grid26

Grid home: /u03/app/grid26

User: oracle

Operating system: Linux5.15.0-317.197.5.1.el9uek.x86_64

Configuration failed.

[FATAL] [INS-20801] Configuration Assistant 'Oracle Cluster Verification Utility' failed.

ACTION: Refer to the logs or the extra details from below for additional information.

*ADDITIONAL INFORMATION:*

Command: /bin/sh -c /u03/app/grid26/bin/cluvfy stage -post crsinst -collect cluster -gi_upgrade -n all

...

...

Exit code: 1

[ Exit Code ] 1

[ End ] CVU - 2026-02-24 17:11:16.693

[WARNING] [INS-43080] Some of the configuration assistants failed, were cancelled or skipped.

ACTION: Refer to the logs or contact Oracle Support Services.

Oops, my verification failed because no time synchronization daemon (ntpd or chronyd) was running on the cluster nodes.

Quick fix: install and start chrony.

[root@racnode1 ~]# yum install chrony

Last metadata expiration check: 3:25:13 ago on Tue 24 Feb 2026 01:50:02 PM CET.

Dependencies resolved.

===============================================================================================================================================================================================

Package Architecture Version Repository Size

===============================================================================================================================================================================================

Installing:

chrony x86_64 4.6.1-2.0.1.el9 ol9_baseos_latest 362 k

Transaction Summary

===============================================================================================================================================================================================

Install 1 Package

Total download size: 362 k

Installed size: 668 k

Is this ok [y/N]: y

Downloading Packages:

chrony-4.6.1-2.0.1.el9.x86_64.rpm 782 kB/s | 362 kB 00:00

-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Total 773 kB/s | 362 kB 00:00

Running transaction check

Transaction check succeeded.

Running transaction test

Transaction test succeeded.

Running transaction

Preparing : 1/1

Running scriptlet: chrony-4.6.1-2.0.1.el9.x86_64 1/1

Installing : chrony-4.6.1-2.0.1.el9.x86_64 1/1

Running scriptlet: chrony-4.6.1-2.0.1.el9.x86_64 1/1

Created symlink /etc/systemd/system/multi-user.target.wants/chronyd.service → /usr/lib/systemd/system/chronyd.service.

Verifying : chrony-4.6.1-2.0.1.el9.x86_64 1/1

Installed:

chrony-4.6.1-2.0.1.el9.x86_64

Complete!

[root@racnode2 ~]# systemctl start chronyd

[root@racnode2 ~]# systemctl enable chronyd

[root@racnode2 ~]# systemctl status chronyd

● chronyd.service - NTP client/server

Loaded: loaded (/usr/lib/systemd/system/chronyd.service; enabled; preset: enabled)

Active: active (running) since Tue 2026-02-24 17:20:35 CET; 26s ago

Docs: man:chronyd(8)

man:chrony.conf(5)

Main PID: 158712 (chronyd)

Tasks: 1 (limit: 52408)

Memory: 3.1M (peak: 3.8M)

CPU: 22ms

CGroup: /system.slice/chronyd.service

└─158712 /usr/sbin/chronyd -F 2

Feb 24 17:20:35 racnode2 systemd[1]: Starting NTP client/server...

Feb 24 17:20:35 racnode2 chronyd[158712]: chronyd version 4.6.1 starting (+CMDMON +NTP +REFCLOCK +RTC +PRIVDROP +SCFILTER +SIGND +ASYNCDNS +NTS +SECHASH +IPV6 +DEBUG)

Feb 24 17:20:35 racnode2 chronyd[158712]: Loaded 0 symmetric keys

Feb 24 17:20:35 racnode2 chronyd[158712]: Using right/UTC timezone to obtain leap second data

Feb 24 17:20:35 racnode2 chronyd[158712]: Loaded seccomp filter (level 2)

Feb 24 17:20:35 racnode2 systemd[1]: Started NTP client/server.
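CVU reported the missing daemon on both racnode1 and racnode2, so the package has to be installed and the service started on every node. The steps can be sketched as a small loop. This is only a sketch under assumptions: root SSH access to each node, and a `DRY_RUN` variable (invented for this example) that defaults to 1 so the commands are only printed.

```shell
#!/bin/bash
# Sketch: install and enable chrony on every cluster node.
# Assumptions: root SSH access to the nodes; DRY_RUN is a variable
# invented for this sketch (1 = only print the commands).
DRY_RUN="${DRY_RUN:-1}"

run_on_node() {
    local node="$1"; shift
    if [ "$DRY_RUN" = "1" ]; then
        echo "ssh root@${node} $*"
    else
        ssh "root@${node}" "$@"
    fi
}

fix_chrony() {
    local node
    for node in "$@"; do
        run_on_node "$node" yum install -y chrony
        run_on_node "$node" systemctl enable --now chronyd
        # Show the current synchronization status of the daemon
        run_on_node "$node" chronyc tracking
    done
}

# Node names as used in this tutorial
fix_chrony racnode1 racnode2
```

Run it with `DRY_RUN=0` to actually execute the commands instead of printing them.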

Install chrony on the other node as well, so that chronyd is running on both nodes. Now we can run the post-checks again:

[oracle@racnode1 grid26]$ ./gridSetup.sh -executeConfigTools

ERROR: Unable to verify the graphical display setup. This application requires X display. Make sure that xdpyinfo exist under PATH variable.

Launching Oracle Grid Infrastructure Setup Wizard...

You can find the logs of this session at:

/u01/app/oraInventory/logs/GridSetupActions2026-02-24_05-22-12PM

[ Start ] CVU - 2026-02-24 17:22:24.108

Command: /bin/sh -c /u03/app/grid26/bin/cluvfy stage -post crsinst -collect cluster -gi_upgrade -n all

Performing following verification checks ...

Node Connectivity ...

Hosts File ...PASSED

Check that maximum (MTU) size packet goes through subnet ...PASSED

subnet mask consistency for subnet "192.168.100.0" ...PASSED

subnet mask consistency for subnet "192.168.101.0" ...PASSED

Node Connectivity ...PASSED

Multicast or broadcast check ...

Checking subnet "192.168.101.0" for multicast communication with multicast

group "224.0.0.251"

Subnet Network Type Multicast Enabled

------------ ------------------------ ------------------------

192.168.101.0 PRIVATE TRUE

Multicast or broadcast check ...PASSED

Time zone consistency ...PASSED

Path existence, ownership, permissions and attributes ...

Path "/var" ...PASSED

Path "/var/lib/oracle" ...PASSED

Path "/u01/app/oraInventory/ContentsXML/inventory.xml" ...PASSED

Path "/dev/asm" ...PASSED

Path "/dev/shm" ...PASSED

Path "/etc/init.d/ohasd" ...PASSED

Path "/etc/init.d/init.ohasd" ...PASSED

Path "/etc/init.d/init.tfa" ...PASSED

Path "/etc/oracle/maps" ...PASSED

Path "/etc/oraInst.loc" ...PASSED

Path "/etc/tmpfiles.d/oracleGI.conf" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/incident" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/metadata" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/incpkg" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/alert" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/metadata_pv" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/stage" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/sweep" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/lck" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/log" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/cdump" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/trace" ...PASSED

Path "/u01/app/oracle/diag/crs/racnode1/crs/metadata_dgif" ...PASSED

Path "/u03/app/grid26/gpnp/wallets/peer/cwallet.sso" ...PASSED

Path "/u03/app/grid26/gpnp/wallets/root/cwallet.sso" ...PASSED

Path "/u03/app/grid26/gpnp/profiles/peer/profile.xml" ...PASSED

Path existence, ownership, permissions and attributes ...PASSED

Cluster Manager Integrity ...PASSED

User Mask ...PASSED

Cluster Integrity ...PASSED

OCR Integrity ...PASSED

CRS Integrity ...

Clusterware Version Consistency ...PASSED

CRS Integrity ...PASSED

Node Application Existence ...PASSED

Single Client Access Name (SCAN) ...

DNS/NIS name service 'rac-scan' ...

Name Service Switch Configuration File Integrity ...PASSED

DNS/NIS name service 'rac-scan' ...PASSED

Single Client Access Name (SCAN) ...PASSED

OLR Integrity ...PASSED

Voting Disk ...PASSED

ASM Integrity ...PASSED

ASM Network ...PASSED

ASM disk group free space ...PASSED

User Not In Group "root": oracle ...PASSED

Clock Synchronization ...

Network Time Protocol (NTP) ...

Daemon 'chronyd' ...PASSED

NTP daemon or service using UDP port 123 ...PASSED

chrony daemon is synchronized with at least one external time source ...PASSED

Network Time Protocol (NTP) ...PASSED

Clock Synchronization ...PASSED

VIP Subnet configuration check ...PASSED

Oracle Net Services configuration ...PASSED

Network configuration consistency checks ...PASSED

Package: psmisc-22.6-19 ...PASSED

File system mount options for path GI_HOME ...PASSED

File system mount option hidepid for proc filesystem ...PASSED

Cleanup of communication socket files ...PASSED

Domain Sockets ...PASSED

Post-check for cluster services setup was successful.

CVU operation performed: stage -post crsinst

Date: Feb 24, 2026, 5:22:24 PM

CVU version: 23.26.1.0.0 (010926x8664)

Clusterware version: 23.0.0.0.0

CVU home: /u03/app/grid26

Grid home: /u03/app/grid26

User: oracle

Operating system: Linux5.15.0-317.197.5.1.el9uek.x86_64

Successfully Configured Software.

[ Exit Code ] 0

[ End ] CVU - 2026-02-24 17:23:01.076

Now everything is OK: the post-check verification completed successfully.

At this stage we have upgraded the Grid home, ASM, OCR and the Grid processes to the newest version, 26ai, on a 2-node cluster. This was a rolling upgrade, so it can be done online: one instance is always up and running on the remaining node.
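To double-check the result, you can query the active Clusterware version and the upgrade state with `crsctl query crs activeversion -f`. The helpers below are a sketch that pulls the bracketed values out of that output. They assume the one-line format known from earlier releases ("... active version on the cluster is [X]. The cluster upgrade state is [Y]. ..."); the function names are hypothetical, so verify against your own output.

```shell
#!/bin/bash
# Sketch: extract values from "crsctl query crs activeversion -f" output
# read on stdin. Assumed (not verified for 26ai) one-line format:
#   Oracle Clusterware active version on the cluster is [X]. The cluster
#   upgrade state is [Y]. The cluster active patch level is [Z].
active_version() {
    sed -n 's/.*active version on the cluster is \[\([^]]*\)].*/\1/p'
}

upgrade_state() {
    sed -n 's/.*upgrade state is \[\([^]]*\)].*/\1/p'
}

# Usage on a cluster node (Grid home path taken from this tutorial):
#   /u03/app/grid26/bin/crsctl query crs activeversion -f | upgrade_state
```

After a completed rolling upgrade the state is normally expected to be NORMAL on all nodes; anything else suggests the upgrade has not finished on every node.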

The next step is to upgrade the Oracle databases to the 26ai home. I will explain this in the next blog; in general the process is very similar to the single-node upgrade described in Database upgrade to AI Database 26ai (23.26.1) for Linux x86-64 upgrade from 19C in 3 steps using gold images.
